
Grounding DINO

Official PyTorch implementation of Grounding DINO, a stronger open-set object detector. Code is available now!

Highlight

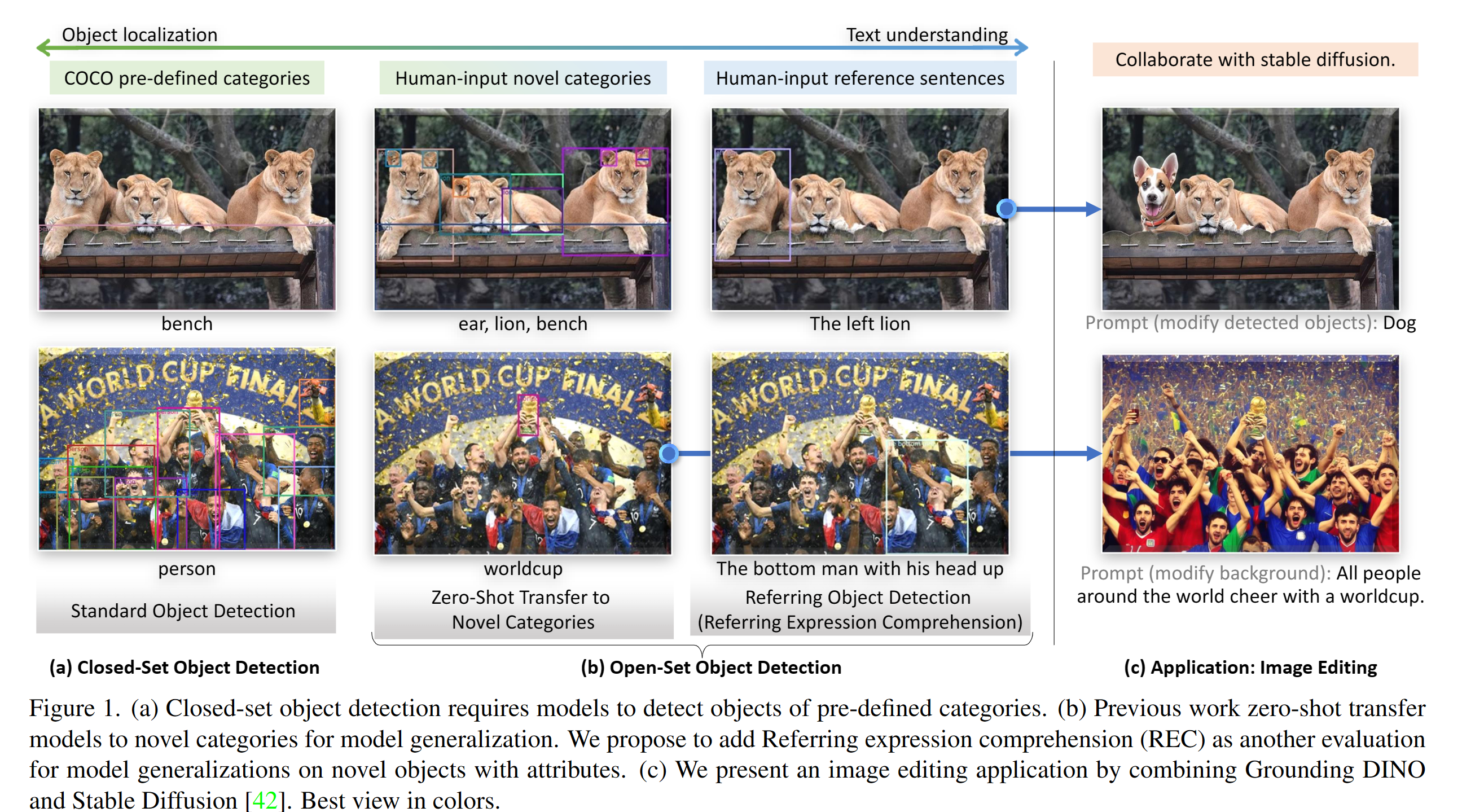

- Open-Set Detection. Detect everything with language!

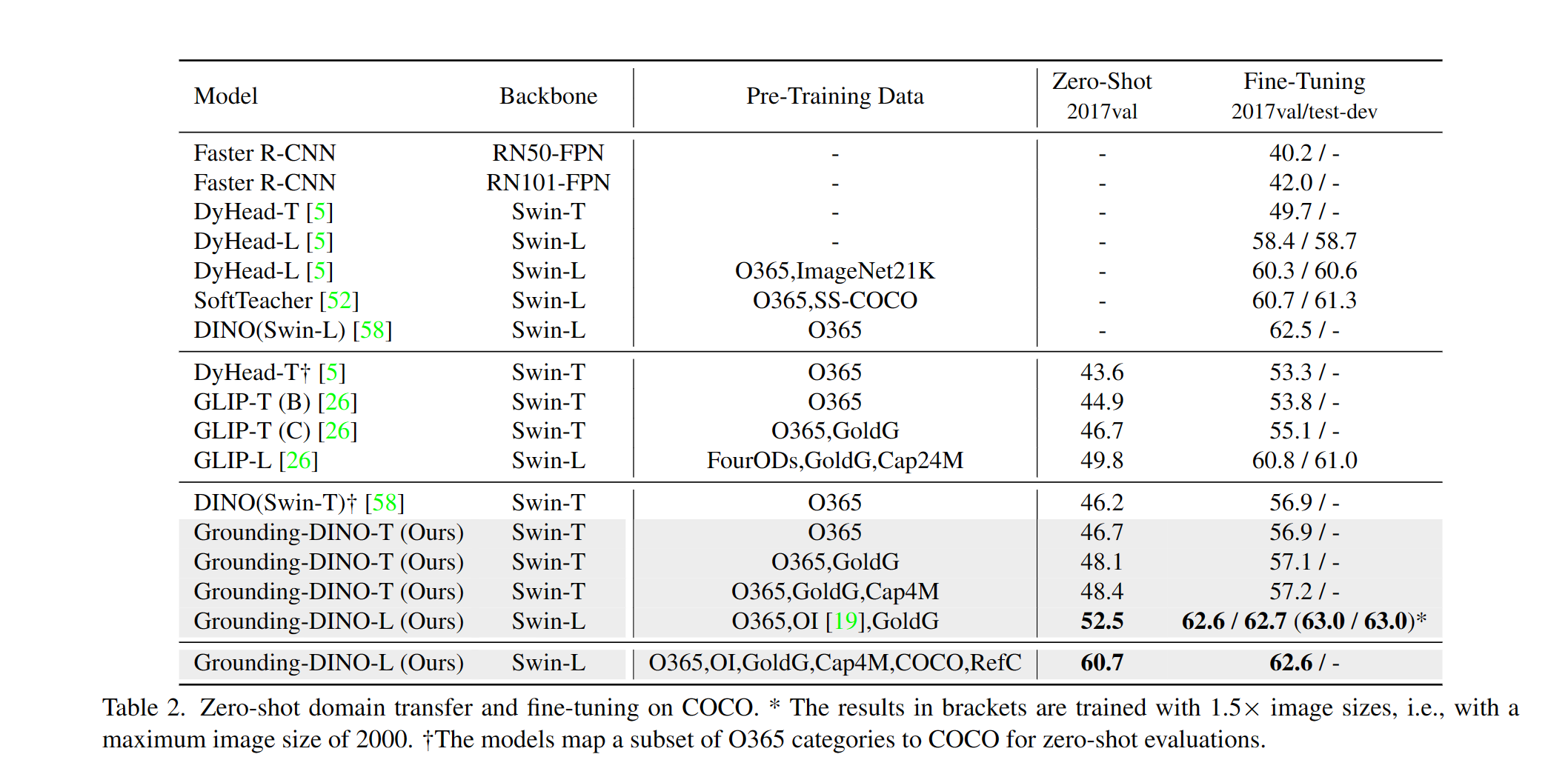

- High Performance. COCO zero-shot 52.5 AP (training without COCO data!). COCO fine-tune 63.0 AP.

- Flexible. Collaboration with Stable Diffusion for Image Editing.

Description

TODO

- Release inference code and demo.

- Release checkpoints.

- Grounding DINO with Stable Diffusion and GLIGEN demos.

Install

If you have a CUDA environment, please make sure the environment variable CUDA_HOME is set.

pip install -e .
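Before running the install command, it can help to confirm that `CUDA_HOME` is actually visible to the process. The following is a minimal sketch, not part of this repository: the helper name `check_cuda_home` is hypothetical, and it only inspects the environment variable mentioned above.

```python
import os
from pathlib import Path


def check_cuda_home(env=None):
    """Return a short status message about the CUDA_HOME environment variable.

    `env` defaults to os.environ; passing a dict makes the check easy to test.
    This helper is hypothetical and is not provided by the repository.
    """
    env = os.environ if env is None else env
    cuda_home = env.get("CUDA_HOME")
    if not cuda_home:
        return "CUDA_HOME is not set"
    if not Path(cuda_home).is_dir():
        return f"CUDA_HOME points to a missing directory: {cuda_home}"
    return f"CUDA_HOME is set to {cuda_home}"


if __name__ == "__main__":
    print(check_cuda_home())
```

If the message reports that the variable is unset on a machine with CUDA, export it in the shell before running `pip install -e .`.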

Demo

See demo/inference_on_a_image.py for more details.

CUDA_VISIBLE_DEVICES=6 python demo/inference_on_a_image.py \
  -c /path/to/config \
  -p /path/to/checkpoint \
  -i .asset/cats.png \
  -o "outputs/0" \
  -t "cat ear."
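The same invocation can be driven from Python, which is convenient when looping over several images or prompts. This is a rough sketch under stated assumptions: the helpers `build_demo_command` and `run_demo` are hypothetical, the flags (`-c`, `-p`, `-i`, `-o`, `-t`) are copied from the command above, and the config and checkpoint paths remain placeholders.

```python
import os
import shlex
import subprocess
import sys


def build_demo_command(config, checkpoint, image, output_dir, text_prompt):
    """Assemble the argv list for demo/inference_on_a_image.py.

    Hypothetical helper; the flags mirror the shell command in this README.
    """
    return [
        sys.executable, "demo/inference_on_a_image.py",
        "-c", config,
        "-p", checkpoint,
        "-i", image,
        "-o", output_dir,
        "-t", text_prompt,
    ]


def run_demo(command, gpu_id=None):
    """Run the demo, optionally pinning it to one GPU via CUDA_VISIBLE_DEVICES."""
    env = dict(os.environ)
    if gpu_id is not None:
        env["CUDA_VISIBLE_DEVICES"] = str(gpu_id)
    return subprocess.run(command, env=env, check=True)


if __name__ == "__main__":
    command = build_demo_command(
        config="/path/to/config",          # placeholder, as in the README
        checkpoint="/path/to/checkpoint",  # placeholder, as in the README
        image=".asset/cats.png",
        output_dir="outputs/0",
        text_prompt="cat ear.",
    )
    # Print instead of executing; call run_demo(command, gpu_id=6) once the
    # placeholder paths point at real files.
    print(shlex.join(command))
```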

Checkpoints

| | name | backbone | Data | box AP on COCO | Checkpoint |
|---|---|---|---|---|---|
| 1 | GroundingDINO-T | Swin-T | O365,GoldG,Cap4M | 48.4 (zero-shot) / 57.2 (fine-tune) | link |

Results

COCO Object Detection Results

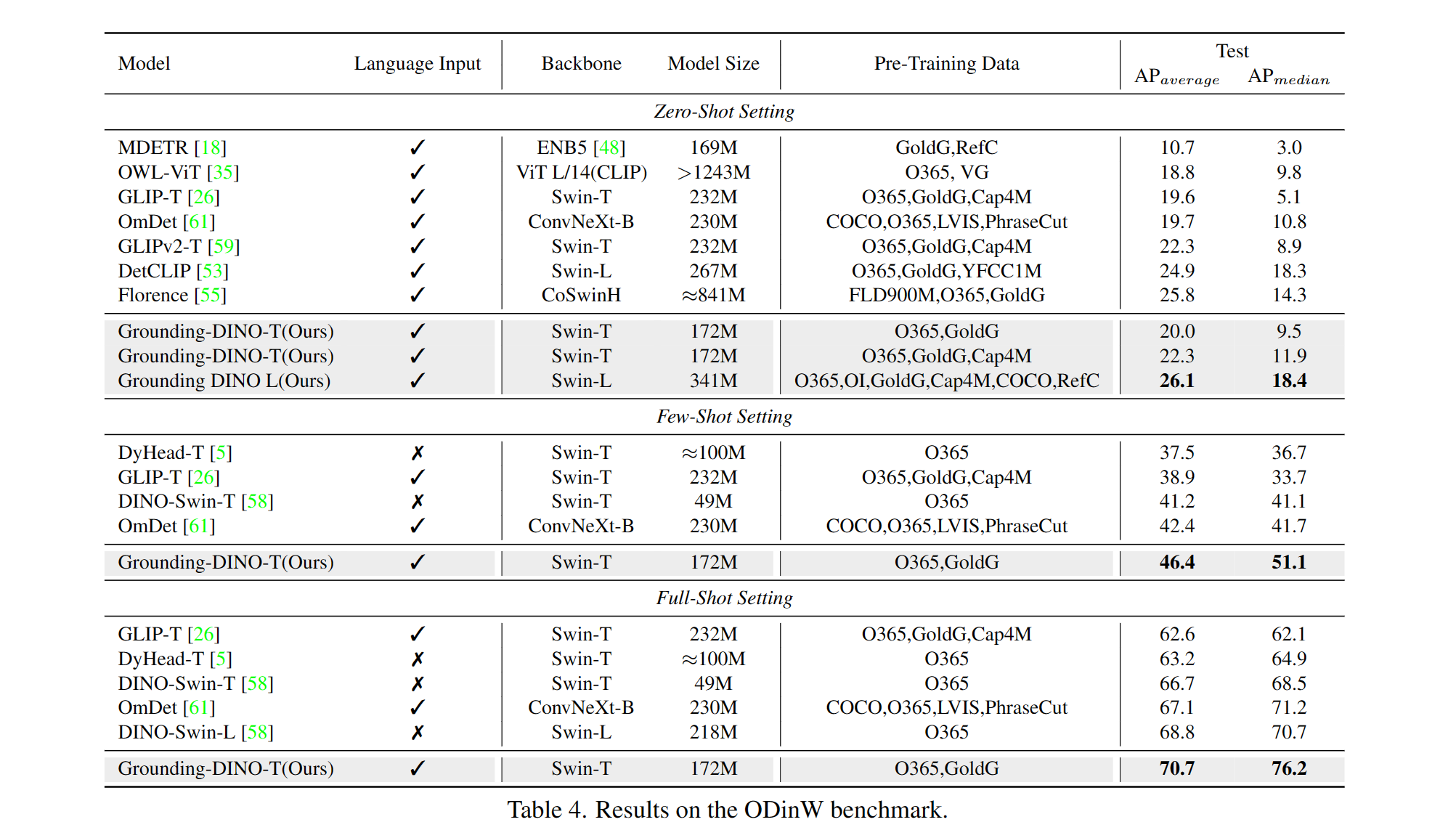

ODinW Object Detection Results

Marrying Grounding DINO with Stable Diffusion for Image Editing

Marrying Grounding DINO with GLIGEN for more Detailed Image Editing

Model

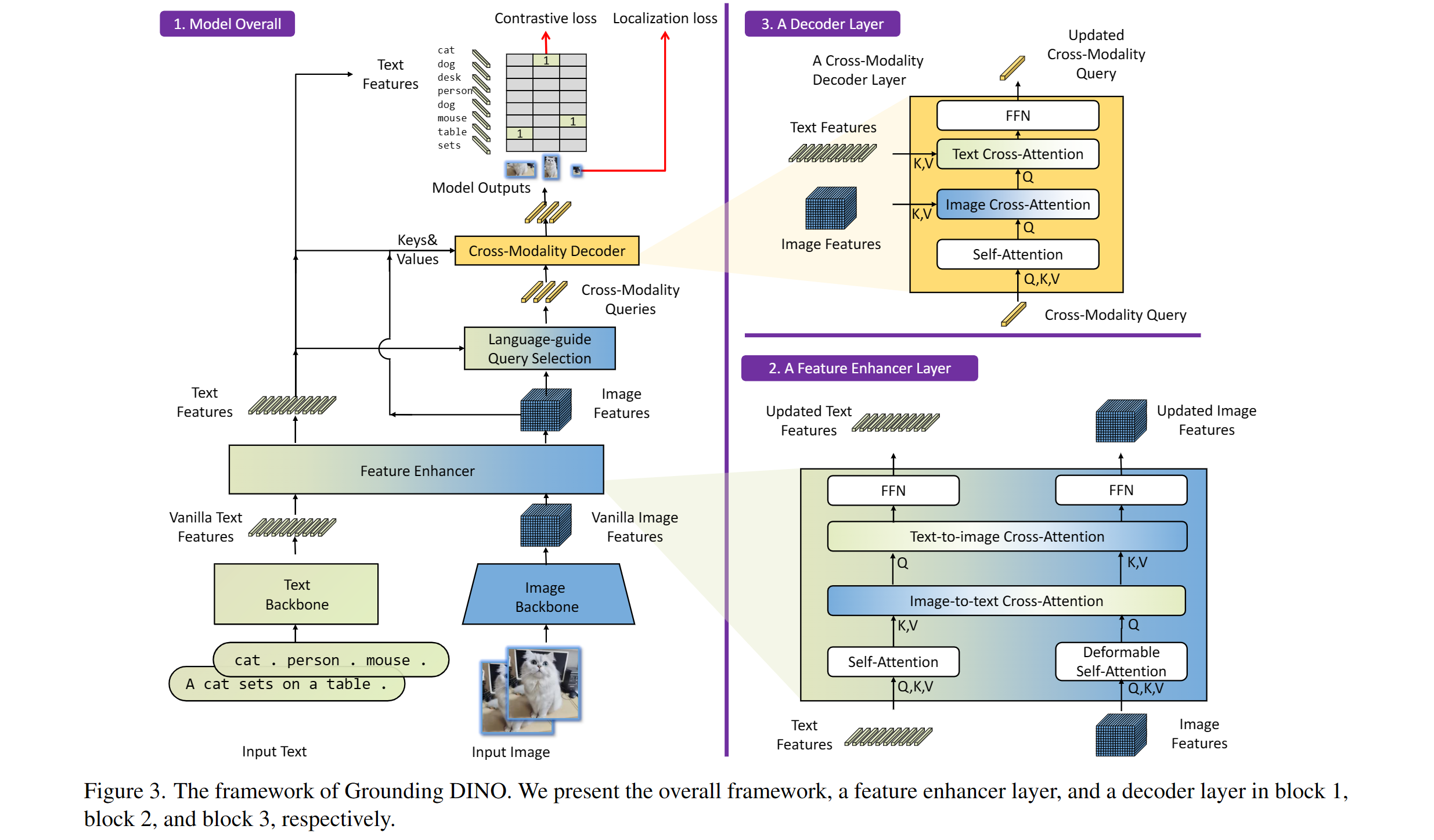

Grounding DINO includes a text backbone, an image backbone, a feature enhancer, language-guided query selection, and a cross-modality decoder.
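To make the data flow between these five components concrete, here is a schematic sketch in plain Python. It is not the repository's implementation: the class `GroundingPipelineSketch`, its field names, and the helper `make_recording_stage` are hypothetical, and the order in which the stages are composed is an assumption based on the component list above. Each stage is an arbitrary callable, so the toy stand-ins below only record the call order.

```python
from dataclasses import dataclass
from typing import Any, Callable, List, Tuple


@dataclass
class GroundingPipelineSketch:
    """Hypothetical wiring of the five components named in this README."""
    text_backbone: Callable[[str], Any]
    image_backbone: Callable[[Any], Any]
    feature_enhancer: Callable[[Any, Any], Tuple[Any, Any]]
    query_selection: Callable[[Any, Any], Any]
    decoder: Callable[[Any, Any, Any], Any]

    def __call__(self, image: Any, text: str) -> Any:
        # 1. Extract features from each modality independently.
        text_feats = self.text_backbone(text)
        image_feats = self.image_backbone(image)
        # 2. Enhance (fuse) the two feature sets (assumed to return both).
        image_feats, text_feats = self.feature_enhancer(image_feats, text_feats)
        # 3. Use the text features to select queries from the image features.
        queries = self.query_selection(image_feats, text_feats)
        # 4. Decode the queries against both modalities into predictions.
        return self.decoder(queries, image_feats, text_feats)


def make_recording_stage(name: str, result: Any, log: List[str]):
    """Build a toy stage that logs its name and returns a fixed result."""
    def stage(*_args):
        log.append(name)
        return result
    return stage


if __name__ == "__main__":
    log: List[str] = []
    pipeline = GroundingPipelineSketch(
        text_backbone=make_recording_stage("text_backbone", "txt", log),
        image_backbone=make_recording_stage("image_backbone", "img", log),
        feature_enhancer=make_recording_stage("feature_enhancer", ("img+", "txt+"), log),
        query_selection=make_recording_stage("query_selection", "queries", log),
        decoder=make_recording_stage("decoder", "boxes", log),
    )
    print(pipeline(image=None, text="cat ear."), log)
```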

Acknowledgement

Our model is related to DINO and GLIP. Thanks for their great work!

We also thank great previous work including DETR, Deformable DETR, SMCA, Conditional DETR, Anchor DETR, Dynamic DETR, DAB-DETR, DN-DETR, etc. More related work is available at Awesome Detection Transformer. A new toolbox, detrex, is available as well.

Thanks to Stable Diffusion and GLIGEN for their awesome models.

Citation

If you find our work helpful for your research, please consider citing the following BibTeX entry.

@inproceedings{ShilongLiu2023GroundingDM,
  title={Grounding DINO: Marrying DINO with Grounded Pre-Training for Open-Set Object Detection},
  author={Shilong Liu and Zhaoyang Zeng and Tianhe Ren and Feng Li and Hao Zhang and Jie Yang and Chunyuan Li and Jianwei Yang and Hang Su and Jun Zhu and Lei Zhang},
  year={2023}
}