👋 Hello @${{ github.actor }}, thank you for submitting an Ultralytics YOLOv8 🚀 PR! To allow your work to be integrated as seamlessly as possible, we advise you to:

👋 Hello @${{ github.actor }}, thank you for submitting an Ultralytics 🚀 PR! To allow your work to be integrated as seamlessly as possible, we advise you to:

- ✅ Verify your PR is **up-to-date** with `ultralytics/ultralytics` `main` branch. If your PR is behind, you can update your code by clicking the 'Update branch' button or by running `git pull` and `git merge main` locally.

- ✅ Verify all YOLOv8 Continuous Integration (CI) **checks are passing**.

- ✅ Update YOLOv8 [Docs](https://docs.ultralytics.com) for any new or updated features.

- ✅ Verify all Ultralytics Continuous Integration (CI) **checks are passing**.

- ✅ Update Ultralytics [Docs](https://docs.ultralytics.com) for any new or updated features.

- ✅ Reduce changes to the absolute **minimum** required for your bug fix or feature addition. _"It is not daily increase but daily decrease, hack away the unessential. The closer to the source, the less wastage there is."_ — Bruce Lee

See our [Contributing Guide](https://docs.ultralytics.com/help/contributing) for details and let us know if you have any questions!

issue-message: |

👋 Hello @${{ github.actor }}, thank you for your interest in Ultralytics YOLOv8 🚀! We recommend a visit to the [Docs](https://docs.ultralytics.com) for new users where you can find many [Python](https://docs.ultralytics.com/usage/python/) and [CLI](https://docs.ultralytics.com/usage/cli/) usage examples and where many of the most common questions may already be answered.

👋 Hello @${{ github.actor }}, thank you for your interest in Ultralytics 🚀! We recommend a visit to the [Docs](https://docs.ultralytics.com) for new users where you can find many [Python](https://docs.ultralytics.com/usage/python/) and [CLI](https://docs.ultralytics.com/usage/cli/) usage examples and where many of the most common questions may already be answered.

If this is a 🐛 Bug Report, please provide a [minimum reproducible example](https://docs.ultralytics.com/help/minimum_reproducible_example/) to help us debug it.

If this is a custom training ❓ Question, please provide as much information as possible, including dataset image examples and training logs, and verify you are following our [Tips for Best Training Results](https://docs.ultralytics.com/guides/model-training-tips//).

If this is a custom training ❓ Question, please provide as much information as possible, including dataset image examples and training logs, and verify you are following our [Tips for Best Training Results](https://docs.ultralytics.com/guides/model-training-tips/).

Join the vibrant [Ultralytics Discord](https://ultralytics.com/discord) 🎧 community for real-time conversations and collaborations. This platform offers a perfect space to inquire, showcase your work, and connect with fellow Ultralytics users.

Join the Ultralytics community where it suits you best. For real-time chat, head to [Discord](https://ultralytics.com/discord) 🎧. Prefer in-depth discussions? Check out [Discourse](https://community.ultralytics.com). Or dive into threads on our [Subreddit](https://reddit.com/r/ultralytics) to share knowledge with the community.

diff = response.text if response.status_code == 200 else f"Failed to get diff: {response.content}"

# Get summary
messages = [
    {
        "role": "system",
        "content": "You are an Ultralytics AI assistant skilled in software development and technical communication. Your task is to summarize GitHub releases in a way that is detailed, accurate, and understandable to both expert developers and non-expert users. Focus on highlighting the key changes and their impact in simple and intuitive terms."
    },
    {
        "role": "user",
        "content": f"Summarize the updates made in the '{latest_tag}' tag, focusing on major changes, their purpose, and potential impact. Keep the summary clear and suitable for a broad audience. Add emojis to enliven the summary. Reply directly with a summary along these example guidelines, though feel free to adjust as appropriate:\n\n"
        f"## 🌟 Summary (single-line synopsis)\n"
        f"## 📊 Key Changes (bullet points highlighting any major changes)\n"
        f"## 🎯 Purpose & Impact (bullet points explaining any benefits and potential impact to users)\n"
        f"\n\nHere's the release diff:\n\n{diff[:300000]}",

<a href="https://console.paperspace.com/github/ultralytics/ultralytics"><img src="https://assets.paperspace.io/img/gradient-badge.svg" alt="Run Ultralytics on Gradient"></a>

<a href="https://colab.research.google.com/github/ultralytics/ultralytics/blob/main/examples/tutorial.ipynb"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open Ultralytics In Colab"></a>

@@ -21,7 +22,7 @@

[Ultralytics](https://ultralytics.com) [YOLOv8](https://github.com/ultralytics/ultralytics) is a cutting-edge, state-of-the-art (SOTA) model that builds upon the success of previous YOLO versions and introduces new features and improvements to further boost performance and flexibility. YOLOv8 is designed to be fast, accurate, and easy to use, making it an excellent choice for a wide range of object detection and tracking, instance segmentation, image classification and pose estimation tasks.

We hope that the resources here will help you get the most out of YOLOv8. Please browse the YOLOv8 <a href="https://docs.ultralytics.com/">Docs</a> for details, raise an issue on <a href="https://github.com/ultralytics/ultralytics/issues/new/choose">GitHub</a> for support, and join our <a href="https://ultralytics.com/discord">Discord</a> community for questions and discussions!

We hope that the resources here will help you get the most out of YOLOv8. Please browse the YOLOv8 <a href="https://docs.ultralytics.com/">Docs</a> for details, raise an issue on <a href="https://github.com/ultralytics/ultralytics/issues/new/choose">GitHub</a> for support, questions, or discussions, and become a member of the Ultralytics <a href="https://ultralytics.com/discord">Discord</a>, <a href="https://reddit.com/r/ultralytics">Reddit</a>, and <a href="https://community.ultralytics.com">Forums</a>!

To request an Enterprise License please complete the form at [Ultralytics Licensing](https://ultralytics.com/license).

@@ -277,7 +278,7 @@ Ultralytics offers two licensing options to accommodate diverse use cases:

## <div align="center">Contact</div>

For Ultralytics bug reports and feature requests, please visit [GitHub Issues](https://github.com/ultralytics/ultralytics/issues), and join our [Discord](https://ultralytics.com/discord) community for questions and discussions!

For Ultralytics bug reports and feature requests, please visit [GitHub Issues](https://github.com/ultralytics/ultralytics/issues). Become a member of the Ultralytics [Discord](https://ultralytics.com/discord), [Reddit](https://reddit.com/r/ultralytics), or [Forums](https://community.ultralytics.com) to ask questions, share projects, join learning discussions, or get help with all things Ultralytics!

<a href="https://console.paperspace.com/github/ultralytics/ultralytics"><img src="https://assets.paperspace.io/img/gradient-badge.svg" alt="Run on Gradient"></a>

<a href="https://colab.research.google.com/github/ultralytics/ultralytics/blob/main/examples/tutorial.ipynb"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"></a>

- For detailed deployment guidance, consult the [MkDocs documentation](https://www.mkdocs.org/user-guide/deploying-your-docs/).

@@ -115,7 +115,7 @@ Choose a hosting provider and deployment method for your MkDocs documentation:

We cherish the community's input as it drives Ultralytics open-source initiatives. Dive into the [Contributing Guide](https://docs.ultralytics.com/help/contributing) and share your thoughts via our [Survey](https://ultralytics.com/survey?utm_source=github&utm_medium=social&utm_campaign=Survey). A heartfelt thank you 🙏 to each contributor!

@@ -126,7 +126,7 @@ Ultralytics Docs presents two licensing options:

## ✉️ Contact

For bug reports and feature requests, navigate to [GitHub Issues](https://github.com/ultralytics/docs/issues). Engage with peers and the Ultralytics team on [Discord](https://ultralytics.com/discord) for enriching conversations!

For Ultralytics bug reports and feature requests, please visit [GitHub Issues](https://github.com/ultralytics/ultralytics/issues). Become a member of the Ultralytics [Discord](https://ultralytics.com/discord), [Reddit](https://reddit.com/r/ultralytics), or [Forums](https://community.ultralytics.com) to ask questions, share projects, join learning discussions, or get help with all things Ultralytics!

@@ -53,7 +53,7 @@ To train a YOLO model on the Caltech-101 dataset for 100 epochs, you can use the

The Caltech-101 dataset contains high-quality color images of various objects, providing a well-structured dataset for object recognition tasks. Here are some examples of images from the dataset:

The example showcases the variety and complexity of the objects in the Caltech-101 dataset, emphasizing the significance of a diverse dataset for training robust object recognition models.

@@ -64,7 +64,7 @@ To train a YOLO model on the Caltech-256 dataset for 100 epochs, you can use the

The Caltech-256 dataset contains high-quality color images of various objects, providing a comprehensive dataset for object recognition tasks. Here are some examples of images from the dataset ([credit](https://ml4a.github.io/demos/tsne_viewer.html)):

The example showcases the diversity and complexity of the objects in the Caltech-256 dataset, emphasizing the importance of a varied dataset for training robust object recognition models.

@@ -67,7 +67,7 @@ To train a YOLO model on the CIFAR-10 dataset for 100 epochs with an image size

The CIFAR-10 dataset contains color images of various objects, providing a well-structured dataset for image classification tasks. Here are some examples of images from the dataset:

The example showcases the variety and complexity of the objects in the CIFAR-10 dataset, highlighting the importance of a diverse dataset for training robust image classification models.

@@ -56,7 +56,7 @@ To train a YOLO model on the CIFAR-100 dataset for 100 epochs with an image size

The CIFAR-100 dataset contains color images of various objects, providing a well-structured dataset for image classification tasks. Here are some examples of images from the dataset:

The example showcases the variety and complexity of the objects in the CIFAR-100 dataset, highlighting the importance of a diverse dataset for training robust image classification models.

@@ -81,7 +81,7 @@ To train a CNN model on the Fashion-MNIST dataset for 100 epochs with an image s

The Fashion-MNIST dataset contains grayscale images of Zalando's articles, providing a well-structured dataset for image classification tasks. Here are some examples of images from the dataset:

The example showcases the variety and complexity of the images in the Fashion-MNIST dataset, highlighting the importance of a diverse dataset for training robust image classification models.

@@ -66,7 +66,7 @@ To train a deep learning model on the ImageNet dataset for 100 epochs with an im

The ImageNet dataset contains high-resolution images spanning thousands of object categories, providing a diverse and extensive dataset for training and evaluating computer vision models. Here are some examples of images from the dataset:

The example showcases the variety and complexity of the images in the ImageNet dataset, highlighting the importance of a diverse dataset for training robust computer vision models.

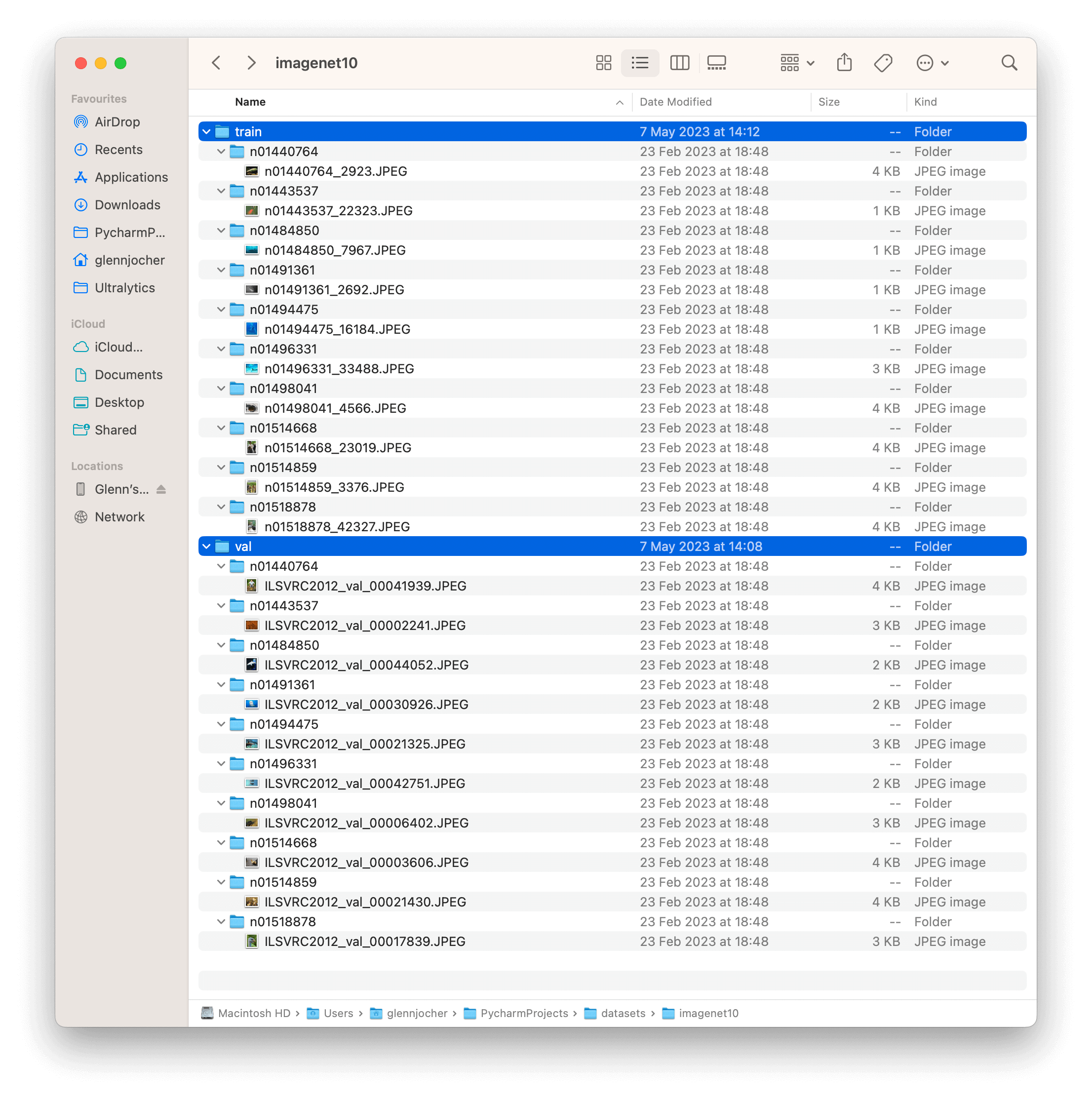

@@ -52,7 +52,7 @@ To test a deep learning model on the ImageNet10 dataset with an image size of 22

The ImageNet10 dataset contains a subset of images from the original ImageNet dataset. These images are chosen to represent the first 10 classes in the dataset, providing a diverse yet compact dataset for quick testing and evaluation.

The example showcases the variety and complexity of the images in the ImageNet10 dataset, highlighting its usefulness for sanity checks and quick testing of computer vision models.


@@ -54,7 +54,7 @@ To train a model on the ImageNette dataset for 100 epochs with a standard image

The ImageNette dataset contains colored images of various objects and scenes, providing a diverse dataset for image classification tasks. Here are some examples of images from the dataset:

The example showcases the variety and complexity of the images in the ImageNette dataset, highlighting the importance of a diverse dataset for training robust image classification models.

@@ -89,7 +89,7 @@ It's important to note that using smaller images will likely yield lower perform

The ImageWoof dataset contains colorful images of various dog breeds, providing a challenging dataset for image classification tasks. Here are some examples of images from the dataset:

The example showcases the subtle differences and similarities among the different dog breeds in the ImageWoof dataset, highlighting the complexity and difficulty of the classification task.

@@ -91,7 +91,7 @@ To train a YOLOv8n model on the African wildlife dataset for 100 epochs with an

The African wildlife dataset comprises a wide variety of images showcasing diverse animal species and their natural habitats. Below are examples of images from the dataset, each accompanied by its corresponding annotations.

- **Mosaiced Image**: Here, we present a training batch consisting of mosaiced dataset images. Mosaicing, a training technique, combines multiple images into one, enriching batch diversity. This method helps enhance the model's ability to generalize across different object sizes, aspect ratios, and contexts.

@@ -70,7 +70,7 @@ To train a YOLOv8n model on the Argoverse dataset for 100 epochs with an image s

The Argoverse dataset contains a diverse set of sensor data, including camera images, LiDAR point clouds, and HD map information, providing rich context for autonomous driving tasks. Here are some examples of data from the dataset, along with their corresponding annotations:

- **Argoverse 3D Tracking**: This image demonstrates an example of 3D object tracking, where objects are annotated with 3D bounding boxes. The dataset provides LiDAR point clouds and camera images to facilitate the development of models for this task.

@@ -90,7 +90,7 @@ To train a YOLOv8n model on the brain tumor dataset for 100 epochs with an image

The brain tumor dataset encompasses a wide array of images featuring diverse object categories and intricate scenes. Presented below are examples of images from the dataset, accompanied by their respective annotations:

- **Mosaiced Image**: Displayed here is a training batch comprising mosaiced dataset images. Mosaicing, a training technique, consolidates multiple images into one, enhancing batch diversity. This approach aids in improving the model's capacity to generalize across various object sizes, aspect ratios, and contexts.

@@ -87,7 +87,7 @@ To train a YOLOv8n model on the COCO dataset for 100 epochs with an image size o

The COCO dataset contains a diverse set of images with various object categories and complex scenes. Here are some examples of images from the dataset, along with their corresponding annotations:

- **Mosaiced Image**: This image demonstrates a training batch composed of mosaiced dataset images. Mosaicing is a technique used during training that combines multiple images into a single image to increase the variety of objects and scenes within each training batch. This helps improve the model's ability to generalize to different object sizes, aspect ratios, and contexts.
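To make the mosaic idea concrete, here is a deliberately simplified sketch in Python with NumPy. It is not the Ultralytics implementation, which, as far as we know, places resized tiles around a randomly chosen center; this version assumes four images of identical shape, tiles them into a fixed 2x2 grid, and shifts each image's pixel boxes (assumed to be `x_min, y_min, x_max, y_max`) by its tile offset. The function name `simple_mosaic` is hypothetical.

```python
import numpy as np


def simple_mosaic(images, boxes_list):
    """Tile four equally sized images into a 2x2 mosaic and shift their boxes.

    images: list of 4 arrays of shape (H, W, C), all with the same shape.
    boxes_list: list of 4 arrays of shape (N_i, 4) holding pixel boxes as
        (x_min, y_min, x_max, y_max) in each source image's coordinates.
    Returns the (2H, 2W, C) mosaic and an (N, 4) array of boxes in mosaic pixels.
    """
    if len(images) != 4 or len(boxes_list) != 4:
        raise ValueError("expected exactly four images and four box arrays")
    h, w, c = images[0].shape
    if any(img.shape != (h, w, c) for img in images):
        raise ValueError("all four images must share the same (H, W, C) shape")

    mosaic = np.zeros((2 * h, 2 * w, c), dtype=images[0].dtype)
    # (x, y) offsets of the top-left, top-right, bottom-left, bottom-right tiles
    offsets = [(0, 0), (w, 0), (0, h), (w, h)]
    shifted = []
    for img, boxes, (dx, dy) in zip(images, boxes_list, offsets):
        mosaic[dy:dy + h, dx:dx + w] = img
        boxes = np.asarray(boxes, dtype=np.float32).reshape(-1, 4)
        # Move every box corner by the offset of the tile its image landed in
        shifted.append(boxes + np.array([dx, dy, dx, dy], dtype=np.float32))
    return mosaic, np.concatenate(shifted, axis=0)
```

For example, four 4x6 images produce an 8x12 mosaic, and a box at `(1, 1, 3, 2)` in the bottom-right image ends up at `(7, 5, 9, 6)`. A fixed grid keeps the arithmetic easy to follow; random centers and scaling add further variety in object size and position.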


@@ -65,7 +65,7 @@ To train a YOLOv8n model on the Global Wheat Head Dataset for 100 epochs with an

The Global Wheat Head Dataset contains a diverse set of outdoor field images, capturing the natural variability in wheat head appearances, environments, and conditions. Here are some examples of data from the dataset, along with their corresponding annotations:

- **Wheat Head Detection**: This image demonstrates an example of wheat head detection, where wheat heads are annotated with bounding boxes. The dataset provides a variety of images to facilitate the development of models for this task.

Labels for this format should be exported to YOLO format with one `*.txt` file per image. If there are no objects in an image, no `*.txt` file is required. The `*.txt` file should be formatted with one row per object in `class x_center y_center width height` format. Box coordinates must be in **normalized xywh** format (from 0 to 1). If your boxes are in pixels, you should divide `x_center` and `width` by image width, and `y_center` and `height` by image height. Class numbers should be zero-indexed (start with 0).
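The normalization rule above is simple arithmetic, so a short sketch can make it explicit. This assumes boxes are given in pixels as `(x_center, y_center, width, height)`; `to_yolo_line` and `to_label_text` are hypothetical helpers, not part of the Ultralytics API, and the six-decimal precision is an arbitrary choice.

```python
def to_yolo_line(class_id, box, img_w, img_h):
    """Build one YOLO label row from a pixel box (x_center, y_center, width, height)."""
    if class_id < 0:
        raise ValueError("class numbers must be zero-indexed (start with 0)")
    x_c, y_c, w, h = box
    # x_center and width are divided by image width; y_center and height by image height
    values = (x_c / img_w, y_c / img_h, w / img_w, h / img_h)
    if not all(0.0 <= v <= 1.0 for v in values):
        raise ValueError(f"normalized value outside 0-1 for box {box}; is it really in pixels?")
    return f"{class_id} " + " ".join(f"{v:.6f}" for v in values)


def to_label_text(objects, img_w, img_h):
    """Return the contents of one *.txt file: one row per (class_id, box) pair.

    An image with no objects yields None, since no *.txt file is required for it.
    """
    if not objects:
        return None
    return "\n".join(to_yolo_line(c, b, img_w, img_h) for c, b in objects) + "\n"
```

For a 640x480 image, a 200x200 pixel box centered at (200, 150) becomes `0 0.312500 0.312500 0.312500 0.416667`.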

When using the Ultralytics YOLO format, organize your training and validation images and labels as shown in the [COCO8 dataset](coco8.md) example below.

@@ -20,7 +20,7 @@ The [LVIS dataset](https://www.lvisdataset.org/) is a large-scale, fine-grained

</p>

<p align="center">

<img width="640" src="https://github.com/ultralytics/ultralytics/assets/26833433/40230a80-e7bc-4310-a860-4cc0ef4bb02a" alt="LVIS Dataset example images">

<img width="640" src="https://github.com/ultralytics/docs/releases/download/0/lvis-dataset-example-images.avif" alt="LVIS Dataset example images">

</p>

## Key Features

@@ -83,7 +83,7 @@ To train a YOLOv8n model on the LVIS dataset for 100 epochs with an image size o

The LVIS dataset contains a diverse set of images with various object categories and complex scenes. Here are some examples of images from the dataset, along with their corresponding annotations:

- **Mosaiced Image**: This image demonstrates a training batch composed of mosaiced dataset images. Mosaicing is a technique used during training that combines multiple images into a single image to increase the variety of objects and scenes within each training batch. This helps improve the model's ability to generalize to different object sizes, aspect ratios, and contexts.

@@ -154,6 +154,6 @@ Ultralytics YOLO models, including the latest YOLOv8, are optimized for real-tim

Yes, the LVIS dataset includes a variety of images with diverse object categories and complex scenes. Here is an example of a sample image along with its annotations:

This mosaiced image demonstrates a training batch composed of multiple dataset images combined into one. Mosaicing increases the variety of objects and scenes within each training batch, enhancing the model's ability to generalize across different contexts. For more details on the LVIS dataset, explore the [LVIS dataset documentation](#key-features).

@@ -65,7 +65,7 @@ To train a YOLOv8n model on the Objects365 dataset for 100 epochs with an image

The Objects365 dataset contains a diverse set of high-resolution images with objects from 365 categories, providing rich context for object detection tasks. Here are some examples of the images in the dataset:

- **Objects365**: This image demonstrates an example of object detection, where objects are annotated with bounding boxes. The dataset provides a wide range of images to facilitate the development of models for this task.

- **Open Images V7**: This image exemplifies the depth and detail of annotations available, including bounding boxes, relationships, and segmentation masks.

Roboflow 100, developed by [Roboflow](https://roboflow.com/?ref=ultralytics) and sponsored by Intel, is a groundbreaking [object detection](../../tasks/detect.md) benchmark. It includes 100 diverse datasets sampled from over 90,000 public datasets. This benchmark is designed to test the adaptability of models to various domains, including healthcare, aerial imagery, and video games.

@@ -104,7 +104,7 @@ You can access it directly from the Roboflow 100 GitHub repository. In addition,

Roboflow 100 consists of datasets with diverse images and videos captured from various angles and domains. Here's a look at examples of annotated images in the RF100 benchmark.

<p align="center">

<img width="640" src="https://blog.roboflow.com/content/images/2022/11/image-2.png" alt="Sample Data and Annotations">

<img width="640" src="https://github.com/ultralytics/docs/releases/download/0/sample-data-annotations.avif" alt="Sample Data and Annotations">

</p>

The diversity of the Roboflow 100 benchmark seen above is a significant advance over traditional benchmarks, which often focus on optimizing a single metric within a limited domain.

@@ -79,7 +79,7 @@ To train a YOLOv8n model on the signature detection dataset for 100 epochs with

The signature detection dataset comprises a wide variety of images showcasing different document types and annotated signatures. Below are examples of images from the dataset, each accompanied by its corresponding annotations.

- **Mosaiced Image**: Here, we present a training batch consisting of mosaiced dataset images. Mosaicing, a training technique, combines multiple images into one, enriching batch diversity. This method helps enhance the model's ability to generalize across different signature sizes, aspect ratios, and contexts.

@@ -78,7 +78,7 @@ To train a YOLOv8n model on the SKU-110K dataset for 100 epochs with an image si

The SKU-110k dataset contains a diverse set of retail shelf images with densely packed objects, providing rich context for object detection tasks. Here are some examples of data from the dataset, along with their corresponding annotations:

- **Densely packed retail shelf image**: This image demonstrates an example of densely packed objects in a retail shelf setting. Objects are annotated with bounding boxes and SKU category labels.

@@ -74,7 +74,7 @@ To train a YOLOv8n model on the VisDrone dataset for 100 epochs with an image si

The VisDrone dataset contains a diverse set of images and videos captured by drone-mounted cameras. Here are some examples of data from the dataset, along with their corresponding annotations:

- **Task 1**: Object detection in images - This image demonstrates an example of object detection in images, where objects are annotated with bounding boxes. The dataset provides a wide variety of images taken from different locations, environments, and densities to facilitate the development of models for this task.

@@ -66,7 +66,7 @@ To train a YOLOv8n model on the VOC dataset for 100 epochs with an image size of

The VOC dataset contains a diverse set of images with various object categories and complex scenes. Here are some examples of images from the dataset, along with their corresponding annotations:

- **Mosaiced Image**: This image demonstrates a training batch composed of mosaiced dataset images. Mosaicing is a technique used during training that combines multiple images into a single image to increase the variety of objects and scenes within each training batch. This helps improve the model's ability to generalize to different object sizes, aspect ratios, and contexts.

@@ -69,7 +69,7 @@ To train a model on the xView dataset for 100 epochs with an image size of 640,

The xView dataset contains high-resolution satellite images with a diverse set of objects annotated using bounding boxes. Here are some examples of data from the dataset, along with their corresponding annotations:

- **Overhead Imagery**: This image demonstrates an example of object detection in overhead imagery, where objects are annotated with bounding boxes. The dataset provides high-resolution satellite images to facilitate the development of models for this task.

@@ -146,17 +146,19 @@ The xView dataset comprises high-resolution satellite images collected from Worl

If you utilize the xView dataset in your research, please cite the following paper:

!!! Quote "BibTeX"

    ```bibtex
    @misc{lam2018xview,
          title={xView: Objects in Context in Overhead Imagery},
          author={Darius Lam and Richard Kuzma and Kevin McGee and Samuel Dooley and Michael Laielli and Matthew Klaric and Yaroslav Bulatov and Brendan McCord},
          year={2018},
          eprint={1802.07856},
          archivePrefix={arXiv},
          primaryClass={cs.CV}
    }
    ```

!!! Quote ""

    === "BibTeX"

        ```bibtex
        @misc{lam2018xview,
              title={xView: Objects in Context in Overhead Imagery},
              author={Darius Lam and Richard Kuzma and Kevin McGee and Samuel Dooley and Michael Laielli and Matthew Klaric and Yaroslav Bulatov and Brendan McCord},
              year={2018},
              eprint={1802.07856},
              archivePrefix={arXiv},
              primaryClass={cs.CV}
        }
        ```

For more information about the xView dataset, visit the official [xView dataset website](http://xviewdataset.org/).

Explorer GUI is like a playground built using the [Ultralytics Explorer API](api.md). It allows you to run semantic/vector similarity search and SQL queries, and even to search with natural language through our ask AI feature powered by LLMs.
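At its core, vector similarity search compares embedding vectors. The sketch below is a brute-force NumPy version using cosine similarity, assuming the image embeddings have already been computed, are non-zero, and are stacked into a 2-D array with one row per image. It is not how the Explorer API stores or queries embeddings, and `top_k_similar` is a hypothetical name.

```python
import numpy as np


def top_k_similar(query, embeddings, k=5):
    """Return indices and cosine similarities of the k rows most similar to query.

    query: 1-D array of length D; embeddings: 2-D array of shape (N, D).
    """
    q = query / np.linalg.norm(query)
    e = embeddings / np.linalg.norm(embeddings, axis=1, keepdims=True)
    sims = e @ q  # cosine similarity between every embedding and the query
    idx = np.argsort(-sims)[:k]  # highest similarity first
    return idx, sims[idx]
```

For large datasets, a vector database with an approximate nearest-neighbor index typically replaces this brute-force matrix product.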

The ask AI feature lets you describe how you want to filter your dataset in natural language, so you don't have to be proficient in writing SQL queries. Our AI-powered query generator writes them for you under the hood. For example, you can say "show me 100 images with exactly one person and 2 dogs. There can be other objects too" and it will internally generate the query and show you those results. Here's an example output when asked to "Show 10 images with exactly 5 persons":

This is a demo built using the Explorer API. You can use the API to build your own exploratory notebooks or scripts to get insights into your datasets. Learn more about the Explorer API [here](api.md).

<img width="1709" alt="Ultralytics Explorer Screenshot 1" src="https://github.com/ultralytics/ultralytics/assets/15766192/feb1fe05-58c5-4173-a9ff-e611e3bba3d0">

<img width="1709" alt="Ultralytics Explorer Screenshot 1" src="https://github.com/ultralytics/docs/releases/download/0/explorer-dashboard-screenshot-1.avif">

</p>

<a href="https://colab.research.google.com/github/ultralytics/ultralytics/blob/main/docs/en/datasets/explorer/explorer.ipynb"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"></a>

@@ -56,7 +56,7 @@ yolo explorer

You can set it like this - `yolo settings openai_api_key="..."`

<p>

<img width="1709" alt="Ultralytics Explorer OpenAI Integration" src="https://github.com/AyushExel/assets/assets/15766192/1b5f3708-be3e-44c5-9ea3-adcd522dfc75">

<img width="1709" alt="Ultralytics Explorer OpenAI Integration" src="https://github.com/ultralytics/docs/releases/download/0/ultralytics-explorer-openai-integration.avif">

@@ -24,7 +24,7 @@ Ultralytics provides support for various datasets to facilitate computer vision

Create embeddings for your dataset, search for similar images, run SQL queries, perform semantic search and even search using natural language! You can get started with our GUI app or build your own using the API. Learn more [here](explorer/index.md).

<p>

<img alt="Ultralytics Explorer Screenshot" src="https://github.com/RizwanMunawar/RizwanMunawar/assets/62513924/d2ebaffd-e065-4d88-983a-33cb6f593785">

<img alt="Ultralytics Explorer Screenshot" src="https://github.com/ultralytics/docs/releases/download/0/ultralytics-explorer-screenshot.avif">

</p>

- Try the [GUI Demo](explorer/index.md)

@@ -38,6 +38,7 @@ Bounding box object detection is a computer vision technique that involves detec

- [COCO](detect/coco.md): Common Objects in Context (COCO) is a large-scale object detection, segmentation, and captioning dataset with 80 object categories.

- [LVIS](detect/lvis.md): A large-scale object detection, segmentation, and captioning dataset with 1203 object categories.

- [COCO8](detect/coco8.md): A smaller subset of 8 COCO images, 4 for training and 4 for validation, suitable for quick tests.

- [COCO128](detect/coco.md): A smaller subset of the first 128 images from COCO train and COCO val, suitable for tests.

- [Global Wheat 2020](detect/globalwheat2020.md): A dataset containing images of wheat heads for the Global Wheat Challenge 2020.

- [Objects365](detect/objects365.md): A high-quality, large-scale dataset for object detection with 365 object categories and over 600K annotated images.

- [OpenImagesV7](detect/open-images-v7.md): A comprehensive dataset by Google with 1.7M train images and 42k validation images.

@@ -56,6 +57,7 @@ Instance segmentation is a computer vision technique that involves identifying a

- [COCO](segment/coco.md): A large-scale dataset designed for object detection, segmentation, and captioning tasks with over 200K labeled images.

- [COCO8-seg](segment/coco8-seg.md): A smaller dataset for instance segmentation tasks, containing a subset of 8 COCO images with segmentation annotations.

- [COCO128-seg](segment/coco.md): A smaller dataset for instance segmentation tasks, containing a subset of 128 COCO images with segmentation annotations.

- [Crack-seg](segment/crack-seg.md): Specifically crafted dataset for detecting cracks on roads and walls, applicable for both object detection and segmentation tasks.

- [Package-seg](segment/package-seg.md): Tailored dataset for identifying packages in warehouses or industrial settings, suitable for both object detection and segmentation applications.

- [Carparts-seg](segment/carparts-seg.md): Purpose-built dataset for identifying vehicle parts, catering to design, manufacturing, and research needs. It serves for both object detection and segmentation tasks.

@@ -88,6 +90,7 @@ Image classification is a computer vision task that involves categorizing an ima

Oriented Bounding Boxes (OBB) is a method in computer vision for detecting angled objects in images using rotated bounding boxes, often applied to aerial and satellite imagery.

- [DOTA-v2](obb/dota-v2.md): A popular OBB aerial imagery dataset with 1.7 million instances and 11,268 images.

- [DOTA8](obb/dota8.md): A smaller subset of the first 8 images from the DOTAv1 split set, 4 for training and 4 for validation, suitable for quick tests.

[DOTA](https://captain-whu.github.io/DOTA/index.html) stands as a specialized dataset, emphasizing object detection in aerial images. Originating from the DOTA series of datasets, it offers annotated images capturing a diverse array of aerial scenes with Oriented Bounding Boxes (OBB).

- **DOTA examples**: This snapshot underlines the complexity of aerial scenes and the significance of Oriented Bounding Box annotations, capturing objects in their natural orientation.

- **Mosaiced Image**: This image demonstrates a training batch composed of mosaiced dataset images. Mosaicing is a technique used during training that combines multiple images into a single image to increase the variety of objects and scenes within each training batch. This helps improve the model's ability to generalize to different object sizes, aspect ratios, and contexts.

Internally, YOLO processes losses and outputs in the `xywhr` format, which represents the bounding box's center point (xy), width, height, and rotation.
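To see how the `xywhr` representation maps back to a drawable box, here is a small NumPy sketch that computes the four corner points by rotating the half-width and half-height offsets around the center. It assumes `r` is in radians and applies the standard 2-D rotation matrix; the exact angle convention used inside Ultralytics is not confirmed here, and `xywhr_to_corners` is a hypothetical name.

```python
import numpy as np


def xywhr_to_corners(x, y, w, h, r):
    """Return a (4, 2) array of corner points for a rotated box.

    (x, y) is the box center, w and h are its width and height, and r is the
    rotation angle in radians (convention assumed, see the note above).
    """
    cos_r, sin_r = np.cos(r), np.sin(r)
    rot = np.array([[cos_r, -sin_r], [sin_r, cos_r]])
    # Corner offsets from the center before rotation, walking around the box
    half = np.array([[-w / 2, -h / 2], [w / 2, -h / 2], [w / 2, h / 2], [-w / 2, h / 2]])
    # Rotate each offset (row vector times rot.T) and translate it to the center
    return half @ rot.T + np.array([x, y])
```

Running it with `r = 0` gives the axis-aligned corners, which is a quick sanity check; any rotation preserves the side lengths `w` and `h`.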

<p align="center"><img width="800" src="https://user-images.githubusercontent.com/26833433/259471881-59020fe2-09a4-4dcc-acce-9b0f7cfa40ee.png" alt="OBB format examples"></p>

<p align="center"><img width="800" src="https://github.com/ultralytics/docs/releases/download/0/obb-format-examples.avif" alt="OBB format examples"></p>

An example of a `*.txt` label file for the above image, which contains an object of class `0` in OBB format, could look like:

The [COCO-Pose](https://cocodataset.org/#keypoints-2017) dataset is a specialized version of the COCO (Common Objects in Context) dataset, designed for pose estimation tasks. It leverages the COCO Keypoints 2017 images and labels to enable the training of models like YOLO for pose estimation tasks.

@ -78,7 +78,7 @@ To train a YOLOv8n-pose model on the COCO-Pose dataset for 100 epochs with an im

The COCO-Pose dataset contains a diverse set of images with human figures annotated with keypoints. Here are some examples of images from the dataset, along with their corresponding annotations:

- **Mosaiced Image**: This image demonstrates a training batch composed of mosaiced dataset images. Mosaicing is a technique used during training that combines multiple images into a single image to increase the variety of objects and scenes within each training batch. This helps improve the model's ability to generalize to different object sizes, aspect ratios, and contexts.

@ -72,7 +72,7 @@ To train Ultralytics YOLOv8n model on the Carparts Segmentation dataset for 100

The Carparts Segmentation dataset includes a diverse array of images and videos taken from various perspectives. Below, you'll find examples of data from the dataset along with their corresponding annotations:

- This image illustrates object segmentation within a sample, featuring annotated bounding boxes with masks surrounding identified objects. The dataset consists of images captured in a variety of locations, environments, and densities, making it a comprehensive resource for building models for this task.

- This instance highlights the diversity and complexity inherent in the dataset, emphasizing the crucial role of high-quality data in computer vision tasks, particularly in the realm of car parts segmentation.

@ -76,7 +76,7 @@ To train a YOLOv8n-seg model on the COCO-Seg dataset for 100 epochs with an imag

COCO-Seg, like its predecessor COCO, contains a diverse set of images with various object categories and complex scenes. However, COCO-Seg introduces more detailed instance segmentation masks for each object in the images. Here are some examples of images from the dataset, along with their corresponding instance segmentation masks:

- **Mosaiced Image**: This image demonstrates a training batch composed of mosaiced dataset images. Mosaicing is a technique used during training that combines multiple images into a single image to increase the variety of objects and scenes within each training batch. This aids the model's ability to generalize to different object sizes, aspect ratios, and contexts.

@ -61,7 +61,7 @@ To train Ultralytics YOLOv8n model on the Crack Segmentation dataset for 100 epo

The Crack Segmentation dataset comprises a varied collection of images and videos captured from multiple perspectives. Below are instances of data from the dataset, accompanied by their respective annotations:

- This image presents an example of image object segmentation, featuring annotated bounding boxes with masks outlining identified objects. The dataset includes a diverse array of images taken in different locations, environments, and densities, making it a comprehensive resource for developing models designed for this particular task.

@ -93,6 +93,7 @@ The `train` and `val` fields specify the paths to the directories containing the

- [COCO](coco.md): A comprehensive dataset for object detection, segmentation, and captioning, featuring over 200K labeled images across a wide range of categories.

- [COCO8-seg](coco8-seg.md): A compact, 8-image subset of COCO designed for quick testing of segmentation model training, ideal for CI checks and workflow validation in the `ultralytics` repository.

- [COCO128-seg](coco.md): A smaller dataset for instance segmentation tasks, containing a subset of 128 COCO images with segmentation annotations.

- [Carparts-seg](carparts-seg.md): A specialized dataset focused on the segmentation of car parts, ideal for automotive applications. It includes a variety of vehicles with detailed annotations of individual car components.

- [Crack-seg](crack-seg.md): A dataset tailored for the segmentation of cracks in various surfaces. Essential for infrastructure maintenance and quality control, it provides detailed imagery for training models to identify structural weaknesses.

- [Package-seg](package-seg.md): A dataset dedicated to the segmentation of different types of packaging materials and shapes. It's particularly useful for logistics and warehouse automation, aiding in the development of systems for package handling and sorting.

@ -61,7 +61,7 @@ To train Ultralytics YOLOv8n model on the Package Segmentation dataset for 100 e

The Package Segmentation dataset comprises a varied collection of images and videos captured from multiple perspectives. Below are instances of data from the dataset, accompanied by their respective annotations:

- This image displays an example of object segmentation, featuring annotated bounding boxes with masks outlining recognized objects. The dataset incorporates a diverse collection of images taken in different locations, environments, and densities, making it a comprehensive resource for developing models specific to this task.

- The example emphasizes the diversity and complexity present in the Package Segmentation dataset, underscoring the significance of high-quality data for computer vision tasks such as package handling and sorting.

This guide provides a comprehensive introduction to setting up a Conda environment for your Ultralytics projects. Conda is an open-source package and environment management system that offers an excellent alternative to pip for installing packages and dependencies. Its isolated environments make it particularly well-suited for data science and machine learning endeavors. For more details, visit the Ultralytics Conda package on [Anaconda](https://anaconda.org/conda-forge/ultralytics) and check out the Ultralytics feedstock repository for package updates on [GitHub](https://github.com/conda-forge/ultralytics-feedstock/).

# Coral Edge TPU on a Raspberry Pi with Ultralytics YOLOv8 🚀

<p align="center">

<img width="800" src="https://github.com/ultralytics/docs/releases/download/0/edge-tpu-usb-accelerator-and-pi.avif" alt="Raspberry Pi single board computer with USB Edge TPU accelerator">

@ -62,7 +62,7 @@ Depending on the specific requirements of a [computer vision task](../tasks/inde

- **Keypoints**: Specific points marked within an image to identify locations of interest. Keypoints are used in tasks like pose estimation and facial landmark detection.

<p align="center">

<img width="100%" src="https://github.com/ultralytics/docs/releases/download/0/types-of-data-annotation.avif" alt="Types of Data Annotation">

</p>

### Common Annotation Formats

@ -91,7 +91,7 @@ Let's say you are ready to annotate now. There are several open-source tools ava

- **[Labelme](https://github.com/labelmeai/labelme)**: A simple and easy-to-use tool that allows for quick annotation of images with polygons, making it ideal for straightforward tasks.

These open-source tools are budget-friendly and provide a range of features to meet different annotation needs.

@ -105,7 +105,7 @@ Before you dive into annotating your data, there are a few more things to keep i

It's important to understand the difference between accuracy and precision and how it relates to annotation. Accuracy refers to how close the annotated data is to the true values. It helps us measure how closely the labels reflect real-world scenarios. Precision indicates the consistency of annotations. It checks if you are giving the same label to the same object or feature throughout the dataset. High accuracy and precision lead to better-trained models by reducing noise and improving the model's ability to generalize from the training data.

<p align="center">

<img width="100%" src="https://github.com/ultralytics/docs/releases/download/0/example-of-precision.avif" alt="Example of Precision">

@ -8,7 +8,7 @@ keywords: Ultralytics, YOLOv8, NVIDIA Jetson, JetPack, AI deployment, embedded s

This comprehensive guide provides a detailed walkthrough for deploying Ultralytics YOLOv8 on [NVIDIA Jetson](https://www.nvidia.com/en-us/autonomous-machines/embedded-systems/) devices using DeepStream SDK and TensorRT. Here we use TensorRT to maximize the inference performance on the Jetson platform.

<img width="1024" src="https://github.com/ultralytics/docs/releases/download/0/deepstream-nvidia-jetson.avif" alt="DeepStream on NVIDIA Jetson">

Generating the TensorRT engine file takes a long time before inference can start, so please be patient.

<div align=center><img width=1000 src="https://github.com/ultralytics/docs/releases/download/0/yolov8-with-deepstream.avif" alt="YOLOv8 with deepstream"></div>

!!! Tip

@ -288,7 +288,7 @@ To set up multiple streams under a single deepstream application, you can do the

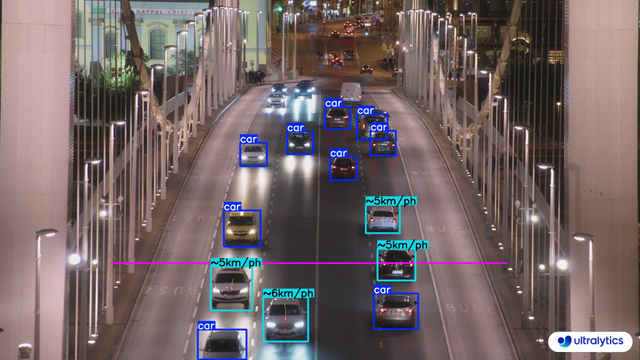

Consider a computer vision project where you want to [estimate the speed of vehicles](./speed-estimation.md) on a highway. The core issue is that current speed monitoring methods are inefficient and error-prone due to outdated radar systems and manual processes. The project aims to develop a real-time computer vision system that can replace legacy [speed estimation](https://www.ultralytics.com/blog/ultralytics-yolov8-for-speed-estimation-in-computer-vision-projects) systems.

<p align="center">

<img width="100%" src="https://github.com/ultralytics/docs/releases/download/0/speed-estimation-using-yolov8.avif" alt="Speed Estimation Using YOLOv8">

</p>

Primary users include traffic management authorities and law enforcement, while secondary stakeholders are highway planners and the public benefiting from safer roads. Key requirements involve evaluating budget, time, and personnel, as well as addressing technical needs like high-resolution cameras and real-time data processing. Additionally, regulatory constraints on privacy and data security must be considered.

@ -53,7 +53,7 @@ Your problem statement helps you conceptualize which computer vision task can so

For example, if your problem is monitoring vehicle speeds on a highway, the relevant computer vision task is object tracking. [Object tracking](../modes/track.md) is suitable because it allows the system to continuously follow each vehicle in the video feed, which is crucial for accurately calculating their speeds.

<p align="center">

<img width="100%" src="https://github.com/ultralytics/docs/releases/download/0/example-of-object-tracking.avif" alt="Example of Object Tracking">

</p>

Other tasks, like [object detection](../tasks/detect.md), are not suitable as they don't provide continuous location or movement information. Once you've identified the appropriate computer vision task, it guides several critical aspects of your project, like model selection, dataset preparation, and model training approaches.

@ -82,7 +82,7 @@ Next, let's look at a few common discussion points in the community regarding co

The most popular computer vision tasks include image classification, object detection, and image segmentation.

<p align="center">

<img width="100%" src="https://github.com/ultralytics/docs/releases/download/0/image-classification-vs-object-detection-vs-image-segmentation.avif" alt="Overview of Computer Vision Tasks">

</p>

For a detailed explanation of various tasks, please take a look at the Ultralytics Docs page on [YOLOv8 Tasks](../tasks/index.md).

@ -92,7 +92,7 @@ For a detailed explanation of various tasks, please take a look at the Ultralyti

No, pre-trained models don't "remember" classes in the traditional sense. They learn patterns from massive datasets, and during custom training (fine-tuning), these patterns are adjusted for your specific task. The model's capacity is limited, and focusing on new information can overwrite some previous learnings.

<p align="center">

<img width="100%" src="https://github.com/ultralytics/docs/releases/download/0/overview-of-transfer-learning.avif" alt="Overview of Transfer Learning">

</p>

If you want to use the classes the model was pre-trained on, a practical approach is to use two models: one retains the original performance, and the other is fine-tuned for your specific task. This way, you can combine the outputs of both models. There are other options like freezing layers, using the pre-trained model as a feature extractor, and task-specific branching, but these are more complex solutions and require more expertise.

@ -79,6 +79,10 @@ Measuring the gap between two objects is known as distance calculation within a

- Mouse Right Click will delete all drawn points

- Mouse Left Click can be used to draw points

???+ warning "Distance is Estimate"

    Distance will be an estimate and may not be fully accurate, as it is calculated using 2-dimensional data, which lacks information about the object's depth.
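A minimal sketch of the underlying calculation, assuming axis-aligned boxes in `(x1, y1, x2, y2)` pixel format: take the centroid of each box and measure the Euclidean distance between them. The function names and the `pixels_per_meter` calibration constant are hypothetical and not part of the Ultralytics API; a single scale factor is only meaningful when both objects lie on the same plane at a known scale, which is exactly why the result above is an estimate.

```python
import math


def centroid(box):
    """Center (cx, cy) of an axis-aligned box given as (x1, y1, x2, y2) in pixels."""
    x1, y1, x2, y2 = box
    return (x1 + x2) / 2, (y1 + y2) / 2


def pixel_distance(box_a, box_b):
    """Euclidean distance in pixels between the centroids of two boxes."""
    (ax, ay), (bx, by) = centroid(box_a), centroid(box_b)
    return math.hypot(bx - ax, by - ay)


def estimate_distance_m(box_a, box_b, pixels_per_meter):
    """Rough metric estimate using a hypothetical, user-supplied calibration constant.

    Ignores depth entirely: objects at different distances from the camera
    would need different scale factors.
    """
    return pixel_distance(box_a, box_b) / pixels_per_meter


print(pixel_distance((0, 0, 2, 2), (3, 4, 5, 6)))
```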

This guide serves as a comprehensive introduction to setting up a Docker environment for your Ultralytics projects. [Docker](https://docker.com/) is a platform for developing, shipping, and running applications in containers. It is particularly beneficial for ensuring that the software will always run the same, regardless of where it's deployed. For more details, visit the Ultralytics Docker repository on [Docker Hub](https://hub.docker.com/r/ultralytics/ultralytics).

For a full list of augmentation hyperparameters used in YOLOv8 please refer to the [configurations page](../usage/cfg.md#augmentation-settings).

@ -157,7 +157,7 @@ This is a plot displaying fitness (typically a performance metric like AP50) aga

- **Usage**: Performance visualization

<p align="center">

<img width="640" src="https://github.com/ultralytics/docs/releases/download/0/best-fitness.avif" alt="Hyperparameter Tuning Fitness vs Iteration">

</p>

#### tune_results.csv

@ -182,7 +182,7 @@ This file contains scatter plots generated from `tune_results.csv`, helping you

After performing the [Segment Task](../tasks/segment.md), it's sometimes desirable to extract the isolated objects from the inference results. This guide provides a generic recipe on how to accomplish this using the Ultralytics [Predict Mode](../modes/predict.md).
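The core of the recipe is plain array masking. The sketch below assumes you already have the image as an `H x W x 3` NumPy array and a binary object mask of shape `H x W` (in the guide these come from the segmentation results; here they are ordinary arrays). `isolate_object` is a hypothetical helper, not an Ultralytics function: it blacks out the background and can optionally crop to the mask's bounding box.

```python
import numpy as np


def isolate_object(image, mask, crop=False):
    """Keep only the pixels covered by `mask`; optionally crop to the mask's bounding box.

    image: H x W x 3 uint8 array. mask: H x W array where nonzero marks the object.
    """
    mask = mask.astype(bool)
    isolated = np.zeros_like(image)  # black background
    isolated[mask] = image[mask]  # copy only the object pixels
    if crop:
        ys, xs = np.nonzero(mask)
        if ys.size == 0:
            return isolated[:0, :0]  # empty mask: nothing to crop
        # Tight bounding box around the mask (inclusive max, hence +1)
        isolated = isolated[ys.min() : ys.max() + 1, xs.min() : xs.max() + 1]
    return isolated


img = np.full((4, 5, 3), 255, dtype=np.uint8)
obj_mask = np.zeros((4, 5), dtype=np.uint8)
obj_mask[1:3, 2:4] = 1
print(isolate_object(img, obj_mask, crop=True).shape)
```

Returning a black background keeps the full-size option simple; a transparent background would instead need an extra alpha channel built from the same mask.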

@ -162,7 +162,7 @@ After performing the [Segment Task](../tasks/segment.md), it's sometimes desirab

No additional steps are required if keeping the full-size image.

<figure markdown>

{ width=240 }

<figcaption>Example full-size output</figcaption>

</figure>

@ -170,7 +170,7 @@ After performing the [Segment Task](../tasks/segment.md), it's sometimes desirab

Additional steps are required to crop the image so that it includes only the object region.

{ align="right" }

@ -208,7 +208,7 @@ After performing the [Segment Task](../tasks/segment.md), it's sometimes desirab

No additional steps are required if keeping the full-size image.

<figure markdown>

{ width=240 }

This comprehensive guide illustrates the implementation of K-Fold Cross Validation for object detection datasets within the Ultralytics ecosystem. We'll leverage the YOLO detection format and key Python libraries such as scikit-learn, pandas, and PyYAML to guide you through the necessary setup, the process of generating feature vectors, and the execution of a K-Fold dataset split.

Whether your project involves the Fruit Detection dataset or a custom data source, this tutorial aims to help you comprehend and apply K-Fold Cross Validation to bolster the reliability and robustness of your machine learning models. While we're applying `k=5` folds for this tutorial, keep in mind that the optimal number of folds can vary depending on your dataset and the specifics of your project.
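The guide itself uses scikit-learn's `KFold` for the split. To show what that step does, here is a dependency-free sketch (`kfold_indices` is a hypothetical helper): shuffle the sample indices once, cut them into `k` folds whose sizes differ by at most one, and let each fold serve as the validation set exactly once while the remaining folds form the training set.

```python
import random


def kfold_indices(n_samples, k=5, seed=0):
    """Return k (train_indices, val_indices) pairs covering range(n_samples)."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)  # shuffle once so folds are not ordered by filename
    # The first n_samples % k folds get one extra sample, so sizes differ by at most one
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    folds, start = [], 0
    for size in fold_sizes:
        folds.append(indices[start : start + size])
        start += size
    splits = []
    for i, val in enumerate(folds):
        # Every other fold goes into the training set for this split
        train = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        splits.append((train, val))
    return splits


for fold, (train, val) in enumerate(kfold_indices(12, k=5)):
    print(fold, len(train), len(val))
```

Fixing the seed makes the split reproducible, which matters when you later compare per-fold metrics.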

@ -49,7 +49,7 @@ Optimizing your computer vision model helps it runs efficiently, especially when

Pruning reduces the size of the model by removing weights that contribute little to the final output. It makes the model smaller and faster without significantly affecting accuracy. Pruning involves identifying and eliminating unnecessary parameters, resulting in a lighter model that requires less computational power. It is particularly useful for deploying models on devices with limited resources.
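One simple variant is unstructured magnitude pruning: weights with the smallest absolute values are treated as contributing least and are set to zero. The NumPy sketch below illustrates only that idea on a single weight array; `magnitude_prune` is a hypothetical helper, and frameworks provide their own utilities (for example, PyTorch's `torch.nn.utils.prune` module). Note that zeros in a dense array still occupy memory, so real speedups usually require sparse kernels or structured pruning.

```python
import numpy as np


def magnitude_prune(weights, sparsity):
    """Zero out roughly the smallest-magnitude `sparsity` fraction of `weights`.

    Ties at the threshold value may prune slightly more than the requested fraction.
    """
    flat = np.abs(weights).ravel()
    k = int(sparsity * flat.size)  # number of weights to remove
    if k == 0:
        return weights.copy()
    threshold = np.partition(flat, k - 1)[k - 1]  # k-th smallest magnitude
    return np.where(np.abs(weights) <= threshold, 0.0, weights)


w = np.array([0.1, -0.5, 0.05, 2.0, -0.01, 0.3])
print(magnitude_prune(w, 0.5))
```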

@ -65,7 +65,7 @@ Quantization converts the model's weights and activations from high precision (l

Knowledge distillation involves training a smaller, simpler model (the student) to mimic the outputs of a larger, more complex model (the teacher). The student model learns to approximate the teacher's predictions, resulting in a compact model that retains much of the teacher's accuracy. This technique is beneficial for creating efficient models suitable for deployment on edge devices with constrained resources.
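For classification outputs, a common distillation objective compares temperature-softened teacher and student probabilities using KL divergence, scaled by T² (the soft-target formulation from Hinton et al.). The sketch below assumes plain NumPy logits and a hypothetical temperature of 4. In practice this soft loss is usually combined with the ordinary loss on ground-truth labels, and distilling detectors such as YOLO typically involves additional, task-specific terms.

```python
import numpy as np


def softmax(logits, temperature=1.0):
    """Row-wise softmax of logits divided by a temperature."""
    z = np.asarray(logits, dtype=np.float64) / temperature
    z = z - z.max(axis=-1, keepdims=True)  # subtract the max for numerical stability
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)


def distillation_loss(student_logits, teacher_logits, temperature=4.0):
    """Mean KL(teacher || student) over the batch on softened distributions, scaled by T^2."""
    p_teacher = softmax(teacher_logits, temperature)
    p_student = softmax(student_logits, temperature)
    kl = np.sum(p_teacher * (np.log(p_teacher) - np.log(p_student)), axis=-1)
    return float(np.mean(kl) * temperature**2)


teacher = np.array([[2.0, 1.0, 0.1]])
student = np.array([[0.1, 1.0, 2.0]])
print(distillation_loss(student, teacher))
```

A higher temperature flattens the teacher's distribution, exposing how it ranks the wrong classes; the T² factor keeps gradient magnitudes comparable as T changes.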

@ -27,7 +27,7 @@ _Quick Tip:_ When running inferences, if you aren't seeing any predictions and y

Intersection over Union (IoU) is a metric in object detection that measures how well the predicted bounding box overlaps with the ground truth bounding box. It is computed as the area of overlap between the two boxes divided by the area of their union. IoU values range from 0 to 1, where 1 indicates a perfect match. IoU is essential because it quantifies how closely the predicted boundaries match the actual object boundaries.

<p align="center">

<img width="100%" src="https://github.com/ultralytics/docs/releases/download/0/intersection-over-union-overview.avif" alt="Intersection over Union Overview">

</p>
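As a minimal sketch, assuming axis-aligned boxes in `(x1, y1, x2, y2)` format (the helper name `box_iou` is illustrative), IoU is the overlap area divided by the combined area of both boxes minus that overlap:

```python
def box_iou(box_a, box_b):
    """IoU of two axis-aligned boxes given as (x1, y1, x2, y2)."""
    # Corners of the intersection rectangle
    ix1, iy1 = max(box_a[0], box_b[0]), max(box_a[1], box_b[1])
    ix2, iy2 = min(box_a[2], box_b[2]), min(box_a[3], box_b[3])
    # Clamp at zero so non-overlapping boxes give zero intersection
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


print(box_iou((0, 0, 2, 2), (1, 1, 3, 3)))  # overlap 1, union 7
```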

### Mean Average Precision

@ -42,7 +42,7 @@ Let's focus on two specific mAP metrics:

Other mAP metrics include mAP@0.75, which uses a stricter IoU threshold of 0.75, and mAP@small, medium, and large, which evaluate precision across objects of different sizes.
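To connect these metrics to numbers, the sketch below computes average precision (AP) for one class from a precision-recall curve using all-point interpolation, one common definition (libraries differ in interpolation details, so treat this as illustrative rather than the exact Ultralytics computation). mAP50 averages this AP over classes at an IoU threshold of 0.5, while mAP50-95 also averages over the ten thresholds 0.50, 0.55, ..., 0.95.

```python
def average_precision(recall, precision):
    """All-point interpolated AP from recall/precision points sorted by increasing recall."""
    mrec = [0.0] + list(recall) + [1.0]
    mpre = [1.0] + list(precision) + [0.0]
    # Precision envelope: each point takes the max precision at any higher recall
    for i in range(len(mpre) - 2, -1, -1):
        mpre[i] = max(mpre[i], mpre[i + 1])
    # Sum rectangle areas wherever recall increases
    return sum((mrec[i] - mrec[i - 1]) * mpre[i] for i in range(1, len(mrec)))


# IoU thresholds averaged by mAP50-95
iou_thresholds = [round(0.5 + 0.05 * i, 2) for i in range(10)]

print(average_precision([0.5, 1.0], [1.0, 0.5]))
print(iou_thresholds)
```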

<p align="center">

<img width="100%" src="https://github.com/ultralytics/docs/releases/download/0/mean-average-precision-overview.avif" alt="Mean Average Precision Overview">

@ -40,7 +40,7 @@ You can use automated monitoring tools to make it easier to monitor models after

The three tools introduced above, Evidently AI, Prometheus, and Grafana, can work together seamlessly as a fully open-source ML monitoring solution that is ready for production. Evidently AI is used to collect and calculate metrics, Prometheus stores these metrics, and Grafana displays them and sets up alerts. While there are many other tools available, this setup is an exciting open-source option that provides robust capabilities for monitoring and maintaining your models.

<p align="center">

<img width="100%" src="https://github.com/ultralytics/docs/releases/download/0/evidently-prometheus-grafana-monitoring-tools.avif" alt="Overview of Open Source Model Monitoring Tools">

</p>

### Anomaly Detection and Alert Systems

@ -62,7 +62,7 @@ When you are setting up your alert systems, keep these best practices in mind:

Data drift detection helps identify when the statistical properties of the input data change over time, which can degrade model performance. It helps you spot a problem before you decide to retrain or adjust your models. Data drift deals with changes in the overall data landscape over time, while anomaly detection focuses on identifying rare or unexpected data points that may require immediate attention.
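To make the idea concrete, here is a minimal sketch that compares one scalar feature between a reference window and a recent window using the two-sample Kolmogorov-Smirnov (KS) statistic, one common drift test. The feature (for example, mean image brightness per frame) and the `threshold` of 0.2 are hypothetical choices you would adapt; dedicated tools such as Evidently AI provide ready-made drift reports.

```python
def ks_statistic(reference, current):
    """Two-sample KS statistic: the largest gap between the two empirical CDFs."""
    ref, cur = sorted(reference), sorted(current)
    n, m = len(ref), len(cur)
    i = j = 0
    d = 0.0
    for v in sorted(set(ref) | set(cur)):
        # Advance each pointer past all samples <= v to evaluate both CDFs at v
        while i < n and ref[i] <= v:
            i += 1
        while j < m and cur[j] <= v:
            j += 1
        d = max(d, abs(i / n - j / m))
    return d


def drift_detected(reference, current, threshold=0.2):
    """Flag drift when the KS statistic exceeds a hypothetical, tunable threshold."""
    return ks_statistic(reference, current) > threshold


reference_brightness = [0.42, 0.45, 0.47, 0.50, 0.52]
current_brightness = [0.61, 0.63, 0.66, 0.70, 0.72]
print(drift_detected(reference_brightness, current_brightness))
```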

@ -82,7 +82,7 @@ Model maintenance is crucial to keep computer vision models accurate and relevan

Once a model is deployed, while monitoring, you may notice changes in data patterns or performance, indicating model drift. Regular updates and re-training become essential parts of model maintenance to ensure the model can handle new patterns and scenarios. There are a few techniques you can use based on how your data is changing.

<p align="center">

<img width="100%" src="https://github.com/ultralytics/docs/releases/download/0/computer-vision-model-drift-overview.avif" alt="Computer Vision Model Drift Overview">

</p>

For example, if the data is changing gradually over time, incremental learning is a good approach. Incremental learning involves updating the model with new data without completely retraining it from scratch, saving computational resources and time. However, if the data has changed drastically, a periodic full re-training might be a better option to ensure the model does not overfit on the new data while losing track of older patterns.

@ -94,7 +94,7 @@ Regardless of the method, validation and testing are a must after updates. It is

The frequency of retraining your computer vision model depends on data changes and model performance. Retrain your model whenever you observe a significant performance drop or detect data drift. Regular evaluations can help determine the right retraining schedule by testing the model against new data. Monitoring performance metrics and data patterns lets you decide if your model needs more frequent updates to maintain accuracy.

<p align="center">

<img width="100%" src="https://github.com/ultralytics/docs/releases/download/0/when-to-retrain-overview.avif" alt="When to Retrain Overview">

@ -88,7 +88,7 @@ Underfitting occurs when your model can't capture the underlying patterns in the

The key is to find a balance between overfitting and underfitting. Ideally, a model should perform well on both training and validation datasets. Regularly monitoring your model's performance through metrics and visual inspections, along with applying the right strategies, can help you achieve the best results.

<p align="center">

<img width="100%" src="https://github.com/ultralytics/docs/releases/download/0/overfitting-underfitting-appropriate-fitting.avif" alt="Overfitting and Underfitting Overview">

</p>

## Data Leakage in Computer Vision and How to Avoid It

@ -19,7 +19,7 @@ A computer vision model is trained by adjusting its internal parameters to minim

During training, the model iteratively makes predictions, calculates errors, and updates its parameters through a process called backpropagation. In this process, the model adjusts its internal parameters (weights and biases) to reduce the errors. By repeating this cycle many times, the model gradually improves its accuracy. Over time, it learns to recognize complex patterns such as shapes, colors, and textures.

<p align="center">

<img width="100%" src="https://github.com/ultralytics/docs/releases/download/0/backpropagation-diagram.avif" alt="What is Backpropagation?">

</p>
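The predict, measure error, and update cycle can be seen in miniature with a one-variable linear model `y = w * x + b` trained with mean squared error. Here the gradients are derived by hand; backpropagation applies the chain rule to compute the same kind of gradients automatically for every layer of a deep network. The data, learning rate, and epoch count are hypothetical values chosen for illustration.

```python
def train_linear(xs, ys, lr=0.05, epochs=500):
    """Fit y = w * x + b by gradient descent on mean squared error."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        # Forward pass: predictions and their errors
        preds = [w * x + b for x in xs]
        errors = [p - y for p, y in zip(preds, ys)]
        # Gradients of MSE = mean(error^2) with respect to w and b
        grad_w = 2 / n * sum(e * x for e, x in zip(errors, xs))
        grad_b = 2 / n * sum(errors)
        # Update step: move each parameter against its gradient
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


# Points on the line y = 2x + 1; training should recover w close to 2 and b close to 1
print(train_linear([0, 1, 2, 3], [1, 3, 5, 7]))
```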

This learning process makes it possible for the computer vision model to perform various [tasks](../tasks/index.md), including [object detection](../tasks/detect.md), [instance segmentation](../tasks/segment.md), and [image classification](../tasks/classify.md). The ultimate goal is to create a model that can generalize its learning to new, unseen images so that it can accurately understand visual data in real-world applications.

@ -64,7 +64,7 @@ Caching can be controlled when training YOLOv8 using the `cache` parameter:

Mixed precision training uses both 16-bit (FP16) and 32-bit (FP32) floating-point types, using FP16 for faster computation and FP32 to maintain precision where needed. Most of the neural network's operations are done in FP16 to benefit from faster computation and lower memory usage. However, a master copy of the model's weights is kept in FP32 to ensure accuracy during the weight update steps. The memory savings let you handle larger models or larger batch sizes within the same hardware constraints.

<p align="center">

<img width="100%" src="https://github.com/ultralytics/docs/releases/download/0/mixed-precision-training-overview.avif" alt="Mixed Precision Training Overview">

</p>

To implement mixed precision training, you'll need to modify your training scripts and ensure your hardware (like GPUs) supports it. Many modern deep learning frameworks, such as TensorFlow, offer built-in support for mixed precision.
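The reason for keeping an FP32 master copy of the weights shows up in a few lines of NumPy. FP16 has only 10 explicit mantissa bits, so between 1.0 and 2.0 its representable values are 2^-10 (about 0.000977) apart, and a small update can be rounded away entirely. The values below are hypothetical; this demonstrates the numerics only, not a training loop.

```python
import numpy as np

weight = 1.0
update = 1e-4  # hypothetical small weight update

# In FP16, 1.0001 is not representable, so the sum rounds back to 1.0
fp16_weight = np.float16(weight) + np.float16(update)
# In FP32, the same update survives
fp32_weight = np.float32(weight) + np.float32(update)

print(fp16_weight)  # 1.0
print(fp32_weight > 1.0)  # True
```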

@ -99,7 +99,7 @@ Early stopping is a valuable technique for optimizing model training. By monitor

The process involves setting a patience parameter that determines how many epochs to wait for an improvement in validation metrics before stopping training. If the model's performance does not improve within these epochs, training is stopped to avoid wasting time and resources.

For YOLOv8, you can enable early stopping by setting the patience parameter in your training configuration. For example, `patience=5` means training will stop if there's no improvement in validation metrics for 5 consecutive epochs. Using this method ensures the training process remains efficient and achieves optimal performance without excessive computation.
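The patience rule itself fits in a few lines. This sketch illustrates the logic under the assumption that a higher validation metric is better (such as mAP); `early_stopping_epoch` is a hypothetical helper, not Ultralytics' internal implementation.

```python
def early_stopping_epoch(val_metrics, patience):
    """Return the epoch index at which training would stop, or None if it never stops early."""
    best = float("-inf")
    best_epoch = 0
    for epoch, metric in enumerate(val_metrics):
        if metric > best:
            # New best result: reset the patience window
            best, best_epoch = metric, epoch
        elif epoch - best_epoch >= patience:
            # `patience` consecutive epochs without improvement
            return epoch
    return None


print(early_stopping_epoch([0.50, 0.40, 0.40, 0.40, 0.40, 0.40], patience=5))
```

With `patience=5`, the best result at epoch 0 followed by five epochs without improvement stops training at epoch 5.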

@ -287,7 +287,7 @@ YOLOv8 benchmarks were run by the Ultralytics team on 10 different model formats

Even though all model exports work with NVIDIA Jetson, we have only included **PyTorch, TorchScript, TensorRT** in the comparison chart below because they make use of the GPU on the Jetson and are guaranteed to produce the best results. All the other exports only utilize the CPU, and their performance is not as good as that of the above three. You can find benchmarks for all exports in the section after this chart.

| Conveyor Belt Packets Counting Using Ultralytics YOLOv8 | Fish Counting in Sea using Ultralytics YOLOv8 |
| :-----------------------------------------------------: | :-------------------------------------------: |

!!! Example "Object Counting using YOLOv8 Example"

| Suitcases Cropping at airport conveyor belt using Ultralytics YOLOv8 |
| :------------------------------------------------------------------: |

!!! Example "Object Cropping using YOLOv8 Example"

@ -73,7 +73,7 @@ Here are some other benefits of data augmentation:

Common augmentation techniques include flipping, rotation, scaling, and color adjustments. Several libraries, such as Albumentations, Imgaug, and TensorFlow's ImageDataGenerator, can generate these augmentations.

<p align="center">

<img width="100%" src="https://github.com/ultralytics/docs/releases/download/0/overview-of-data-augmentations.avif" alt="Overview of Data Augmentations">

</p>
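Geometric augmentations must transform the labels along with the pixels. As a minimal sketch, assuming YOLO-format labels `(class_id, x_center, y_center, width, height)` normalized to `[0, 1]`, a horizontal flip reverses the image's width axis and mirrors each box's x center; `hflip_with_labels` is a hypothetical helper for illustration.

```python
import numpy as np


def hflip_with_labels(image, labels):
    """Horizontally flip an image and its normalized YOLO-format labels."""
    flipped = image[:, ::-1].copy()  # reverse the column (width) axis
    # Only the x center changes; y, width, and height are unaffected by a horizontal flip
    flipped_labels = [(c, 1.0 - x, y, w, h) for c, x, y, w, h in labels]
    return flipped, flipped_labels


img = np.arange(6).reshape(2, 3)
print(hflip_with_labels(img, [(0, 0.25, 0.5, 0.2, 0.2)]))
```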

With respect to YOLOv8, you can [augment your custom dataset](../modes/train.md) by modifying the dataset configuration file, a .yaml file. In this file, you can add an augmentation section with parameters that specify how you want to augment your data.

@ -123,7 +123,7 @@ Common tools for visualizations include:

For a more advanced approach to EDA, you can use the Ultralytics Explorer tool. It offers robust capabilities for exploring computer vision datasets. By supporting semantic search, SQL queries, and vector similarity search, the tool makes it easy to analyze and understand your data. With Ultralytics Explorer, you can create embeddings for your dataset to find similar images, run SQL queries for detailed analysis, and perform semantic searches, all through a user-friendly graphical interface.
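The idea behind vector similarity search can be sketched with NumPy: each image is represented by an embedding vector, and the most similar images are those whose embeddings have the highest cosine similarity to a query embedding. The toy 2D vectors and the `top_k_similar` helper below are hypothetical and are not part of the Explorer API, which handles embedding generation and search for you.

```python
import numpy as np


def top_k_similar(query, embeddings, k=3):
    """Indices of the k rows of `embeddings` most cosine-similar to `query`, best first."""
    emb = embeddings / np.linalg.norm(embeddings, axis=1, keepdims=True)  # unit-length rows
    q = query / np.linalg.norm(query)
    scores = emb @ q  # cosine similarity of each row with the query
    return np.argsort(-scores)[:k].tolist()


image_embeddings = np.array([[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]])
print(top_k_similar(np.array([1.0, 0.1]), image_embeddings, k=2))
```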

<p align="center">

<img width="100%" src="https://github.com/ultralytics/docs/releases/download/0/ultralytics-explorer-openai-integration.avif" alt="Overview of Ultralytics Explorer">

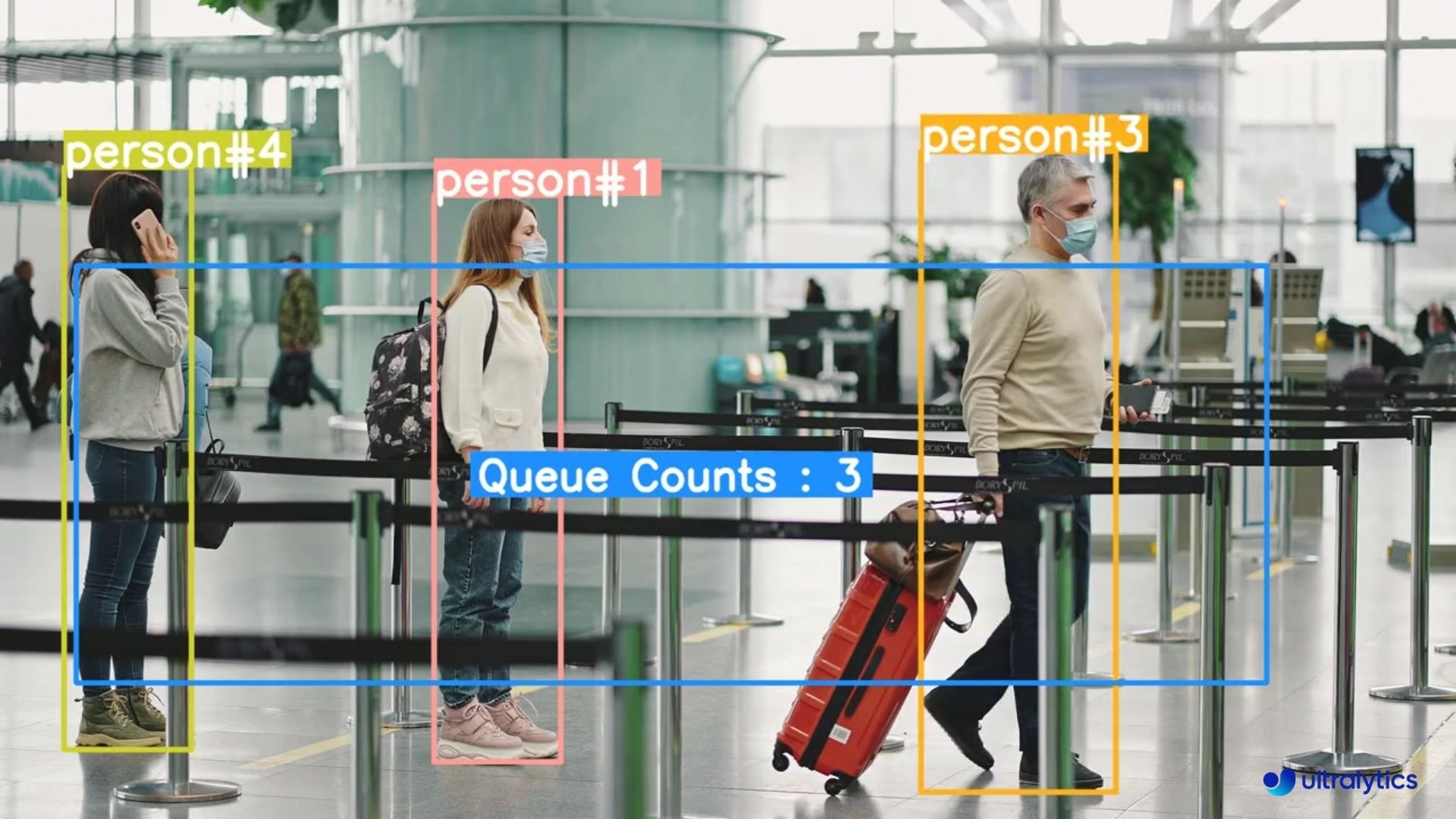

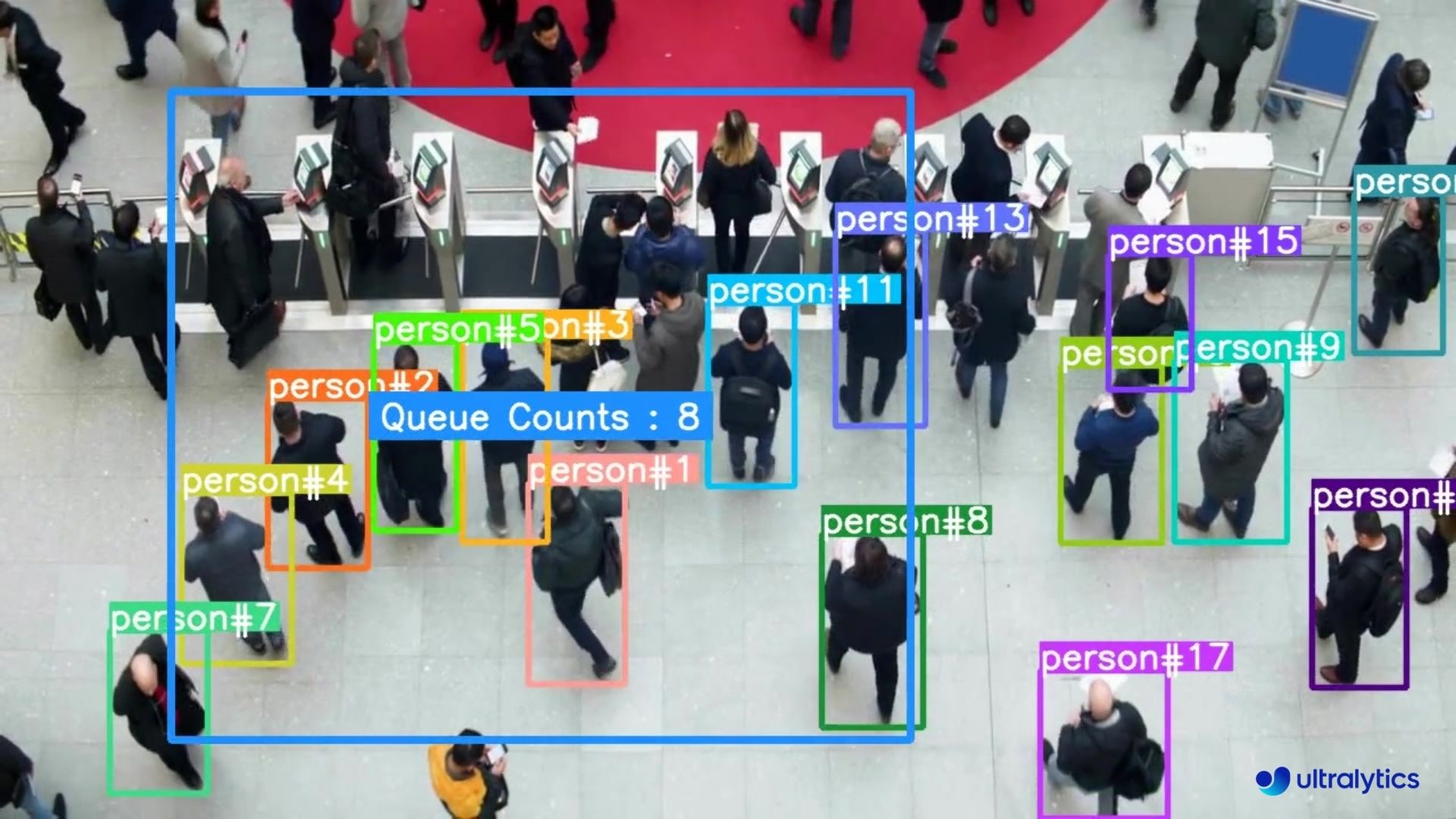

| Queue management at airport ticket counter using Ultralytics YOLOv8 | Queue monitoring in crowd using Ultralytics YOLOv8 |
| :-----------------------------------------------------------------: | :-----------------------------------------------: |

!!! Example "Queue Management using YOLOv8 Example"

| People Counting in Different Region using Ultralytics YOLOv8 | Crowd Counting in Different Region using Ultralytics YOLOv8 |
| :----------------------------------------------------------: | :---------------------------------------------------------: |

This guide has been tested using [this ROS environment](https://github.com/ambitious-octopus/rosbot_ros/tree/noetic), which is a fork of the [ROSbot ROS repository](https://github.com/husarion/rosbot_ros). This environment includes the Ultralytics YOLO package, a Docker container for easy setup, comprehensive ROS packages, and Gazebo worlds for rapid testing. It is designed to work with the [Husarion ROSbot 2 PRO](https://husarion.com/manuals/rosbot/). The code examples provided will work in any ROS Noetic/Melodic environment, both in simulation and in the real world.

The `sensor_msgs/Image` [message type](https://docs.ros.org/en/api/sensor_msgs/html/msg/Image.html) is commonly used in ROS for representing image data. It contains fields for encoding, height, width, and pixel data, making it suitable for transmitting images captured by cameras or other sensors. Image messages are widely used in robotic applications for tasks such as visual perception, object detection, and navigation.
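To show how those fields fit together, here is a minimal NumPy sketch that turns the raw `data` buffer of an Image message into an image array using `height`, `width`, `step`, and `encoding`. It is an illustration under stated assumptions, not the guide's own code: it takes the fields as plain arguments (so it runs without ROS), handles only a few 8-bit encodings, and the `image_msg_to_array` name is hypothetical.

```python
import numpy as np

# Channels per pixel for a few common 8-bit sensor_msgs/Image encodings (assumed subset).
CHANNELS = {"mono8": 1, "rgb8": 3, "bgr8": 3, "rgba8": 4, "bgra8": 4}


def image_msg_to_array(data: bytes, height: int, width: int, step: int, encoding: str) -> np.ndarray:
    """Convert the raw fields of a sensor_msgs/Image message into an H x W x C uint8 array."""
    if encoding not in CHANNELS:
        raise ValueError(f"unsupported encoding: {encoding}")
    channels = CHANNELS[encoding]
    # Each row occupies `step` bytes, which may include padding after width * channels.
    rows = np.frombuffer(data, dtype=np.uint8).reshape(height, step)
    image = rows[:, : width * channels].reshape(height, width, channels)
    # Reorder RGB-based encodings to the BGR order that OpenCV-style code expects.
    if encoding == "rgb8":
        image = image[..., ::-1]
    elif encoding == "rgba8":
        image = image[..., [2, 1, 0, 3]]
    return image
```

Inside a ROS callback this would be called as `image_msg_to_array(msg.data, msg.height, msg.width, msg.step, msg.encoding)`, and the resulting array can be passed to a YOLO model like any other image.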

<p align="center">

<img width="100%" src="https://github.com/ultralytics/docs/releases/download/0/detection-segmentation-ros-gazebo.avif" alt="Detection and Segmentation in ROS Gazebo">

</p>

### Image Step-by-Step Usage

## Use Ultralytics with ROS `sensor_msgs/PointCloud2`

<p align="center">

<img width="100%" src="https://github.com/ultralytics/docs/releases/download/0/detection-segmentation-ros-gazebo-1.avif" alt="Detection and Segmentation in ROS Gazebo">

</p>

The `sensor_msgs/PointCloud2` [message type](https://docs.ros.org/en/api/sensor_msgs/html/msg/PointCloud2.html) is a data structure used in ROS to represent 3D point cloud data. This message type is integral to robotic applications, enabling tasks such as 3D mapping, object recognition, and localization.
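A PointCloud2 message stores its points as a flat byte buffer described by `fields` (each with a name, byte offset, and datatype), `point_step` (bytes per point), and `is_bigendian`. The sketch below, which assumes `x`, `y`, and `z` are `FLOAT32` fields and uses a hypothetical `pointcloud2_xyz` helper, decodes them with a NumPy structured dtype and drops NaN points.

```python
import numpy as np


def pointcloud2_xyz(data: bytes, point_step: int, offsets: dict, count: int, big_endian: bool = False) -> np.ndarray:
    """Extract an (N, 3) float32 array of x, y, z from the raw bytes of a PointCloud2 message.

    Assumes x, y and z are FLOAT32 fields whose byte offsets are given in `offsets`.
    """
    order = ">" if big_endian else "<"
    dtype = np.dtype(
        {
            "names": ["x", "y", "z"],
            "formats": [order + "f4"] * 3,
            "offsets": [offsets["x"], offsets["y"], offsets["z"]],
            "itemsize": point_step,  # bytes per point, including extra fields such as rgb
        }
    )
    points = np.frombuffer(data, dtype=dtype, count=count)
    xyz = np.stack([points["x"], points["y"], points["z"]], axis=-1).astype(np.float32)
    # Drop invalid points, which drivers commonly mark with NaN.
    return xyz[np.isfinite(xyz).all(axis=1)]
```

With a real message, `offsets` can be built as `{f.name: f.offset for f in msg.fields}` and `count` as `msg.width * msg.height`.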

```

<p align="center">

<img width="100%" src="https://github.com/ultralytics/docs/releases/download/0/point-cloud-segmentation-ultralytics.avif" alt="Point Cloud Segmentation with Ultralytics">

</p>

Welcome to the Ultralytics documentation on how to use YOLOv8 with [SAHI](https://github.com/obss/sahi) (Slicing Aided Hyper Inference). This comprehensive guide aims to furnish you with all the essential knowledge you'll need to implement SAHI alongside YOLOv8. We'll deep-dive into what SAHI is, why sliced inference is critical for large-scale applications, and how to integrate these functionalities with YOLOv8 for enhanced object detection performance.
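Sliced inference starts by tiling the image into overlapping windows. SAHI ships its own slicing utilities; the from-scratch sketch below only illustrates the idea, using hypothetical `slice_boxes` and `to_image_coords` helpers and an assumed overlap ratio of 0.2. Each tile would be passed to YOLOv8, and the resulting boxes shifted back into full-image coordinates before overlapping detections are merged.

```python
def slice_boxes(image_height, image_width, slice_height=512, slice_width=512, overlap_ratio=0.2):
    """Return (x1, y1, x2, y2) tiles that cover the whole image with the given overlap."""
    step_y = max(1, int(slice_height * (1 - overlap_ratio)))
    step_x = max(1, int(slice_width * (1 - overlap_ratio)))
    boxes = []
    y = 0
    while True:
        y2 = min(y + slice_height, image_height)
        y1 = max(0, y2 - slice_height)  # pull the last row of tiles back inside the image
        x = 0
        while True:
            x2 = min(x + slice_width, image_width)
            x1 = max(0, x2 - slice_width)  # same for the last column
            boxes.append((x1, y1, x2, y2))
            if x2 >= image_width:
                break
            x += step_x
        if y2 >= image_height:
            break
        y += step_y
    return boxes


def to_image_coords(box, tile):
    """Shift a detection box predicted on a tile back into full-image coordinates."""
    dx, dy = tile[0], tile[1]
    return (box[0] + dx, box[1] + dy, box[2] + dx, box[3] + dy)
```

Overlapping tiles mean an object cut by one tile boundary is usually seen whole in a neighboring tile, at the cost of duplicate detections that the merge step has to remove.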

<th>YOLOv8 with SAHI</th>

</tr>

<tr>

<td><img src="https://github.com/ultralytics/docs/releases/download/0/yolov8-without-sahi.avif" alt="YOLOv8 without SAHI" width="640"></td>

<td><img src="https://github.com/ultralytics/docs/releases/download/0/yolov8-with-sahi.avif" alt="YOLOv8 with SAHI" width="640"></td>

</tr>

The Security Alarm System Project utilizing Ultralytics YOLOv8 integrates advanced computer vision capabilities to enhance security measures. YOLOv8, developed by Ultralytics, provides real-time object detection, allowing the system to identify and respond to potential security threats promptly. This project offers several advantages:

#### Email Received Sample

<img width="256" src="https://github.com/ultralytics/docs/releases/download/0/email-received-sample.avif" alt="Email Received Sample">
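An alert like the one in this sample can be composed and sent with Python's standard `email` and `smtplib` modules. In the minimal sketch below, the subject and body text, the Gmail SMTP host and port, and the `build_alert_email` and `send_alert` helpers are all assumptions rather than the project's exact code; Gmail may also require an app password instead of your account password.

```python
import smtplib
from email.message import EmailMessage


def build_alert_email(sender: str, recipient: str, object_count: int) -> EmailMessage:
    """Compose a plain-text alert reporting how many objects were detected."""
    msg = EmailMessage()
    msg["From"] = sender
    msg["To"] = recipient
    msg["Subject"] = "Security Alert"
    msg.set_content(f"ALERT - {object_count} object(s) have been detected!")
    return msg


def send_alert(msg: EmailMessage, password: str, host: str = "smtp.gmail.com", port: int = 587) -> None:
    """Send msg over SMTP with STARTTLS, logging in as the message's sender."""
    with smtplib.SMTP(host, port) as server:
        server.starttls()
        server.login(msg["From"], password)
        server.send_message(msg)
```

Calling `send_alert` once per detection event, rather than once per frame, keeps the inbox from being flooded while an object stays in view.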

| Speed Estimation on Road using Ultralytics YOLOv8 | Speed Estimation on Bridge using Ultralytics YOLOv8 |
| :---: | :---: |

!!! Example "Speed Estimation using YOLOv8 Example"

Now that we know what to expect, let's dive right into the steps and get your project moving forward.

Choosing between training from scratch and using transfer learning affects how you prepare your data. Training from scratch requires a diverse dataset to build the model's understanding from the ground up. Transfer learning, on the other hand, allows you to use a pre-trained model and adapt it with a smaller, more specific dataset. The specific model you choose to train also determines how you need to prepare your data, such as resizing images or adding annotations, according to that model's requirements.
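In Ultralytics, the two strategies differ mainly in what you load: a model `.yaml` file builds an untrained architecture, while a `.pt` file loads pretrained weights to fine-tune. A minimal sketch, assuming YOLOv8 detection models and hypothetical `model_source` and `train_model` helpers:

```python
VALID_SIZES = {"n", "s", "m", "l", "x"}


def model_source(from_scratch: bool, size: str = "n") -> str:
    """Pick the YOLOv8 detection model file for the chosen training strategy.

    A .yaml file describes the architecture only, so training starts from random
    weights; a .pt file carries pretrained weights for transfer learning.
    """
    if size not in VALID_SIZES:
        raise ValueError(f"size must be one of {sorted(VALID_SIZES)}, got {size!r}")
    return f"yolov8{size}.{'yaml' if from_scratch else 'pt'}"


def train_model(data: str, from_scratch: bool, size: str = "n", epochs: int = 100):
    """Train on `data` (a dataset .yaml file) using the chosen strategy."""
    from ultralytics import YOLO  # imported lazily so model_source works without it

    model = YOLO(model_source(from_scratch, size))
    return model.train(data=data, epochs=epochs)
```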

<p align="center">

<img width="100%" src="https://github.com/ultralytics/docs/releases/download/0/training-from-scratch-vs-transfer-learning.avif" alt="Training From Scratch Vs. Using Transfer Learning">

</p>

Note: When choosing a model, consider where it will be [deployed](./model-deployment-options.md) to ensure compatibility and performance. For example, lightweight models are ideal for edge computing due to their efficiency on resource-constrained devices. To learn more about the key points related to defining your project, read [our guide](./defining-project-goals.md) on defining your project's goals and selecting the right model.

- **Image Segmentation:** You'll label each pixel in the image according to the object it belongs to, creating detailed object boundaries.
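For YOLO-format datasets, each image has a `.txt` label file with one object per line and coordinates normalized by the image width and height: `class x_center y_center width height` for detection boxes and `class x1 y1 x2 y2 ...` for segmentation polygons. The sketch below converts pixel coordinates into those lines; the `box_to_yolo` and `polygon_to_yolo` helper names are hypothetical.

```python
def box_to_yolo(class_id, x1, y1, x2, y2, image_width, image_height) -> str:
    """Format a pixel-space box given by its corners as a YOLO detection label line."""
    x_center = (x1 + x2) / 2 / image_width
    y_center = (y1 + y2) / 2 / image_height
    width = (x2 - x1) / image_width
    height = (y2 - y1) / image_height
    return f"{class_id} {x_center:.6f} {y_center:.6f} {width:.6f} {height:.6f}"


def polygon_to_yolo(class_id, polygon, image_width, image_height) -> str:
    """Format a pixel-space polygon [(x, y), ...] as a YOLO segmentation label line."""
    if len(polygon) < 3:
        raise ValueError("a polygon needs at least three points")
    coords = [f"{x / image_width:.6f} {y / image_height:.6f}" for x, y in polygon]
    return " ".join([str(class_id), *coords])
```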

<p align="center">

<img width="100%" src="https://github.com/ultralytics/docs/releases/download/0/different-types-of-image-annotation.avif" alt="Different Types of Image Annotation">

</p>

[Data collection and annotation](./data-collection-and-annotation.md) can be a time-consuming manual effort. Annotation tools can help make this process easier. Here are some useful open-source annotation tools: [Label Studio](https://github.com/HumanSignal/label-studio), [CVAT](https://github.com/cvat-ai/cvat), and [Labelme](https://github.com/labelmeai/labelme).

After splitting your data, you can perform data augmentation by applying transformations like rotating, scaling, and flipping images to artificially increase the size of your dataset. Data augmentation makes your model more robust to variations and improves its performance on unseen images.
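Geometric augmentations have to transform the labels as well as the pixels. As a minimal sketch, assuming YOLO-format labels with normalized coordinates, a horizontal flip only mirrors each box's x-center; rotation and scaling need more bookkeeping and are omitted here. The `hflip_with_labels` helper is hypothetical.

```python
import numpy as np


def hflip_with_labels(image: np.ndarray, labels: np.ndarray):
    """Horizontally flip an H x W x C image together with its YOLO-format box labels.

    labels is an (N, 5) array of [class_id, x_center, y_center, width, height]
    with coordinates normalized to [0, 1], so only x_center changes.
    """
    flipped = image[:, ::-1].copy()  # reverse the column order
    new_labels = labels.astype(float).copy()  # leave the caller's labels untouched
    new_labels[:, 1] = 1.0 - new_labels[:, 1]
    return flipped, new_labels
```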

<p align="center">

<img width="100%" src="https://github.com/ultralytics/docs/releases/download/0/examples-of-data-augmentations.avif" alt="Examples of Data Augmentations">

</p>

Libraries like OpenCV, Albumentations, and TensorFlow offer flexible augmentation functions that you can use. Additionally, some libraries, such as Ultralytics, have [built-in augmentation settings](../modes/train.md) directly within their model training function, simplifying the process.

To understand your data better, you can use tools like [Matplotlib](https://matplotlib.org/) or [Seaborn](https://seaborn.pydata.org/) to visualize the images and analyze their distribution and characteristics. Visualizing your data helps identify patterns, anomalies, and the effectiveness of your augmentation techniques. You can also use [Ultralytics Explorer](../datasets/explorer/index.md), a tool for exploring computer vision datasets with semantic search, SQL queries, and vector similarity search.
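Before plotting, it often helps to count how many instances of each class the dataset contains, since heavily imbalanced classes are a common source of weak performance. A minimal sketch, assuming YOLO-format `.txt` label files in a single directory and hypothetical `class_distribution` and `plot_distribution` helpers, with Matplotlib used for the bar chart:

```python
from collections import Counter
from pathlib import Path


def class_distribution(label_dir: str) -> Counter:
    """Count instances per class id across all YOLO-format .txt label files in label_dir."""
    counts = Counter()
    for label_file in Path(label_dir).glob("*.txt"):
        for line in label_file.read_text().splitlines():
            if line.strip():  # skip blank lines
                counts[int(line.split()[0])] += 1
    return counts


def plot_distribution(counts: Counter, names: dict) -> None:
    """Show a bar chart of instance counts, labeling bars with class names where known."""
    import matplotlib.pyplot as plt  # imported lazily so counting works without Matplotlib

    ids = sorted(counts)
    plt.bar([names.get(i, str(i)) for i in ids], [counts[i] for i in ids])
    plt.ylabel("instances")
    plt.title("Class distribution")
    plt.show()
```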

<p align="center">

<img width="100%" src="https://github.com/ultralytics/docs/releases/download/0/explorer-dashboard-screenshot-1.avif" alt="The Ultralytics Explorer Tool">

</p>

By properly [understanding, splitting, and augmenting your data](./preprocessing_annotated_data.md), you can develop a well-trained, validated, and tested model that performs well in real-world applications.

Monitoring tools can help you track key performance indicators (KPIs) and detect anomalies or drops in accuracy. Monitoring also makes you aware of model drift, where the model's performance declines over time due to changes in the input data. Periodically retrain the model with updated data to maintain accuracy and relevance.
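One simple, illustrative way to watch for drift is to track the rolling average detection confidence in production and flag a sustained drop below a baseline measured on validation data. This is a heuristic sketch, not an Ultralytics feature: the `ConfidenceDriftMonitor` class, window size, and tolerance are assumptions, and a confidence drop is only one possible symptom of drift.

```python
from collections import deque
from statistics import mean


class ConfidenceDriftMonitor:
    """Flag possible drift when the rolling mean detection confidence falls below a baseline."""

    def __init__(self, baseline: float, window: int = 100, tolerance: float = 0.1):
        self.baseline = baseline  # e.g. mean confidence on the validation set
        self.tolerance = tolerance  # allowed drop before raising a flag
        self.scores = deque(maxlen=window)  # keeps only the most recent scores

    def update(self, confidence: float) -> bool:
        """Record one confidence score; return True if drift is suspected."""
        self.scores.append(confidence)
        if len(self.scores) < self.scores.maxlen:
            return False  # wait until the window is full
        return mean(self.scores) < self.baseline - self.tolerance
```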

In addition to monitoring and maintenance, documentation is also key. Thoroughly document the entire process, including model architecture, training procedures, hyperparameters, data preprocessing steps, and any changes made during deployment and maintenance. Good documentation ensures reproducibility and makes future updates or troubleshooting easier. By effectively monitoring, maintaining, and documenting your model, you can ensure it remains accurate, reliable, and easy to manage over its lifecycle.

Streamlit makes it simple to build and deploy interactive web applications. Combining it with Ultralytics YOLOv8 allows for real-time object detection and analysis directly in your browser. YOLOv8's high accuracy and speed make it well suited to live video streams and to applications in security, retail, and beyond.

2. Install the `python-sixel` library in your virtual environment. This is a [fork](https://github.com/lubosz/python-sixel?tab=readme-ov-file) of the `PySixel` library, which is no longer maintained.

## Example Inference Results

<p align="center">

<img width="800" src="https://github.com/ultralytics/docs/releases/download/0/view-image-in-terminal.avif" alt="View Image in Terminal">

</p>

# Troubleshooting Common YOLO Issues

<p align="center">

<img width="800" src="https://github.com/ultralytics/docs/releases/download/0/yolo-common-issues.avif" alt="YOLO Common Issues Image">

</p>

Python threads allow your program to work on multiple operations at once. However, Python's Global Interpreter Lock (GIL) means that only one thread can execute Python bytecode at a time.

<p align="center">

<img width="800" src="https://github.com/ultralytics/docs/releases/download/0/single-vs-multi-thread-examples.avif" alt="Single vs Multi-Thread Examples">

</p>

While this sounds like a limitation, threads can still provide concurrency, especially for I/O-bound operations or when using operations that release the GIL, like those performed by YOLO's underlying C libraries.
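A common pattern for thread-safe inference is to create a separate model instance inside each thread instead of sharing one across threads. The sketch below shows that pattern with Python's `threading` module. `predict_in_threads` is a hypothetical helper, and `model_factory` would be something like `lambda: YOLO("yolov8n.pt")` in practice, which is an assumption about how you construct your model.

```python
import threading


def predict_in_threads(model_factory, sources):
    """Run inference on each source in its own thread, with one model instance per thread.

    model_factory is a zero-argument callable returning a fresh model; each model
    is assumed to be callable on a source, as Ultralytics YOLO objects are.
    """
    results = [None] * len(sources)

    def worker(index, source):
        model = model_factory()  # created inside the thread, never shared
        results[index] = model(source)  # each thread writes only its own slot

    threads = [threading.Thread(target=worker, args=(i, s)) for i, s in enumerate(sources)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return results
```

Creating one model per thread costs extra memory, but it avoids two threads mutating the same model's internal state at the same time.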

Welcome! We're thrilled that you're considering contributing to our [Ultralytics](https://ultralytics.com) [open-source](https://github.com/ultralytics) projects. Your involvement not only helps enhance the quality of our repositories but also benefits the entire community. This guide provides clear guidelines and best practices to help you get started.
