Similar to what we already do in other test suites:

- Try cleaning up resources three times.
- If unsuccessful, don't fail the test and just log the error. The
  cleanup script should be the one to deal with this.
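
A minimal sketch of the retry-then-log behavior described above. The helper name `cleanup_with_retries` and its parameters are made up for illustration and are not the actual test driver code:

```python
import logging

logger = logging.getLogger(__name__)


def cleanup_with_retries(cleanup_fn, attempts=3):
    """Run cleanup_fn up to `attempts` times; log instead of raising on failure.

    Hypothetical helper: returns True if a cleanup attempt succeeded.
    """
    for attempt in range(1, attempts + 1):
        try:
            cleanup_fn()
            return True
        except Exception as error:  # Broad on purpose: cleanup must not fail the test.
            logger.warning("Cleanup attempt %d/%d failed: %s", attempt,
                           attempts, error)
    # Give up quietly; the periodic cleanup script is expected to handle leftovers.
    logger.error("Cleanup failed after %d attempts", attempts)
    return False
```

The boolean return value is only there so a caller can decide whether to log extra context; the test outcome itself is not affected.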

ref b/282081851

Add a new binary that runs all core end2end tests in fuzzing mode.

In this mode FuzzingEventEngine is substituted for the default event

engine. This means that time is simulated, as is IO. The FEE gets

control of callback delays also.

In our tests the `Step()` function becomes, instead of a single call to

`completion_queue_next`, a series of calls to that function and

`FuzzingEventEngine::Tick`, driving forward the event loop until

progress can be made.
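
To illustrate the shape of that loop, here is a toy Python model (the real code is C++). `FakeEngine`, `step`, and `max_ticks` are invented stand-ins and do not mirror the actual `FuzzingEventEngine` API:

```python
from collections import deque


class FakeEngine:
    """Toy simulated engine: callbacks fire only when tick() advances time."""

    def __init__(self):
        self.now = 0
        self.timers = []  # List of (deadline, callback) pairs.

    def run_after(self, delay, callback):
        self.timers.append((self.now + delay, callback))

    def tick(self, step_size=1):
        self.now += step_size
        due = [t for t in self.timers if t[0] <= self.now]
        self.timers = [t for t in self.timers if t[0] > self.now]
        for _, callback in due:
            callback()


def step(poll_completion_queue, engine, max_ticks=1000):
    """Alternate between polling the queue and ticking the engine."""
    for _ in range(max_ticks):
        event = poll_completion_queue()
        if event is not None:
            return event
        engine.tick()  # No event yet: drive simulated time forward.
    raise TimeoutError("no progress after max_ticks ticks")


# Usage: an operation that completes 5 simulated time units from now.
events = deque()
engine = FakeEngine()
engine.run_after(5, lambda: events.append("op_complete"))
result = step(lambda: events.popleft() if events else None, engine)
```

No wall-clock time passes in this model; progress comes only from the ticks.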

PR guide:

---

**New binaries**

`core_end2end_test_fuzzer` - the new fuzzer itself

`seed_end2end_corpus` - a tool that produces an interesting seed corpus

**Config changes for safe fuzzing**

The implementation tries to use the config fuzzing work we've previously

deployed in api_fuzzer to fuzz across experiments. Since some

experiments are far too experimental to be safe in such fuzzing (and

this will always be the case):

- a new flag is added to experiments to opt-out of this fuzzing
- a new hook is added to the config system to allow variables to
  re-write their inputs before setting them during the fuzz

**Event manager/IO changes**

Changes are made to the event engine shims so that tcp_server_posix can

run with a non-FD carrying EventEngine. These are in my mind a bit

clunky, but they work and they're in code that we expect to delete in

the medium term, so I think overall the approach is good.

**Changes to time**

A small tweak is made to fix a bug initializing time for fuzzers in
time.cc - we were previously failing to initialize
`g_process_epoch_cycles`.

**Changes to `Crash`**

A version that prints to stdio is added so that we can reliably print a

crash from the fuzzer.

**Changes to CqVerifier**

Hooks are added to allow the top level loop to hook the verification

functions with a function that steps time between CQ polls.

**Changes to end2end fixtures**

State machinery moves from the fixture to the test infra, to keep the

customizations for fuzzing or not in one place. This means that fixtures

are now just client/server factories, which is overall nice.

It did necessitate moving some bespoke machinery into

h2_ssl_cert_test.cc - this file is beginning to be problematic in

borrowing parts but not all of the e2e test machinery. Some future PR

needs to solve this.

A cq arg is added to the Make functions since the cq is now owned by the

test and not the fixture.

**Changes to test registration**

`TEST_P` is replaced by `CORE_END2END_TEST` and our own test registry is

used as a first depot for test information.

The gtest version of these tests queries that registry to manually

register tests with gtest. This ultimately changes the name of our tests

again (I think for the last time) - the new names are shorter and more

readable, so I don't count this as a regression.

The fuzzer version of these tests constructs a database of fuzzable

tests that it can consult to look up a particular suite/test/config

combination specified by the fuzzer to fuzz against. This gives us a

single fuzzer that can test all 3k-ish fuzzing ready tests and
cross-pollinate configuration between them.

**Changes to test config**

The zero size registry stuff was causing some problems with the event

engine feature macros, so instead I've removed those and used GTEST_SKIP

in the problematic tests. I think that's the approach we should move
towards in the future.

**Which tests are included**

Configs that are compatible - those that do not do fd manipulation

directly (these are incompatible with FuzzingEventEngine), and those

that do not join threads on their shutdown path (as these are

incompatible with our cq wait methodology). Each we can talk about in

the future - fd manipulation would be a significant expansion of

FuzzingEventEngine, and is probably not worth it, however many uses of

background threads now should probably evolve to be EventEngine::Run

calls in the future, and then would be trivially enabled in the fuzzers.

Some tests currently fail in the fuzzing environment; a
`SKIP_IF_FUZZING` macro disables these few when running in the
fuzzing environment. We'll burn these down in the future.

**Changes to fuzzing_event_engine**

Changes are made to time: an exponential sweep forward is used now -

this catches small time precision things early, but makes decade long

timers (we have them) able to be used right now. In the future we'll

just skip time forward to the next scheduled timer, but that approach

doesn't yet work due to legacy timer system interactions.
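
As a rough illustration of the exponential sweep idea, here is a Python sketch. The starting step, growth factor, and nanosecond units are assumptions for the example, not the values used in fuzzing_event_engine:

```python
def exponential_sweep(target_ns, first_step_ns=1, growth=2):
    """Yield simulated timestamps that advance by geometrically growing steps.

    Early steps are tiny (catching small precision issues), later steps are
    huge (so very long timers are reached in few iterations).
    """
    now = 0
    step = first_step_ns
    while now < target_ns:
        now = min(now + step, target_ns)  # Never overshoot the target.
        yield now
        step *= growth


# Usage: count the iterations needed to reach a decade-long timer.
decade_ns = 10 * 365 * 24 * 3600 * 10**9
steps_to_decade = sum(1 for _ in exponential_sweep(decade_ns))
```

With doubling steps starting at 1 ns, a decade is reached in well under a hundred iterations, which is why very long timers stay usable.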

Changes to port assignment: we ensure that ports are legal numbers

before assigning them via `grpc_pick_port_or_die`.

A race condition between time checking and io is fixed.

---------

Co-authored-by: ctiller <ctiller@users.noreply.github.com>

Resolve `TESTING_VERSION` to `dev-VERSION` when the job is initiated by

a user, and not the CI. Override this behavior by setting

`FORCE_TESTING_VERSION`.

This solves the problem with the manual job runs executed against a WIP

branch (e.g. a PR) overriding the tag of the CI-built image we use for

daily testing.

The `dev` and `dev-VERSION` "magic" values supported by the

`--testing_version` flag:

- `dev` and `dev-master` are treated as `master`: all
  `config.version_gte` checks resolve to `True`.
- `dev-VERSION` is treated as `VERSION`: `dev-v1.55.x` is treated as
  simply `v1.55.x`. We do this so that when manually running jobs for old
  branches the feature skip check still works, and unsupported tests are
  skipped.
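
A small sketch of the mapping described in the list above. The function name `normalize_testing_version` is hypothetical and this is not the driver's actual implementation:

```python
def normalize_testing_version(testing_version):
    """Map the "magic" dev values to the version used for feature checks.

    Hypothetical helper illustrating the rules above.
    """
    if testing_version in ("dev", "dev-master"):
        return "master"
    if testing_version.startswith("dev-"):
        # dev-VERSION is treated as plain VERSION.
        return testing_version[len("dev-"):]
    return testing_version


# Usage: a manual run against an old release branch.
resolved = normalize_testing_version("dev-v1.55.x")
```

Any value without the `dev` prefix passes through unchanged.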

This change takes care of all langs/branches, no backports needed.

ref b/256845629

This makes the JSON API visible as part of the C-core API, but in the

`experimental` namespace. It will be used as part of various

experimental APIs that we will be introducing in the near future, such

as the audit logging API.

This file does not contain a shebang, and whenever I try and run it it

wedges my console into some weird state.

There's a .sh file with the same name that should be run instead. Remove

the executable bit of the thing we shouldn't run directly so we, like,

don't.

<!--

If you know who should review your pull request, please assign it to

that

person, otherwise the pull request would get assigned randomly.

If your pull request is for a specific language, please add the

appropriate

lang label.

-->

Previously the error message didn't provide much context, example:

```py
Traceback (most recent call last):
  File "/tmpfs/tmp/tmp.BqlenMyXyk/grpc/tools/run_tests/xds_k8s_test_driver/tests/affinity_test.py", line 127, in test_affinity
    self.assertLen(
AssertionError: [] has length of 0, expected 1.
```

ref b/279990584.

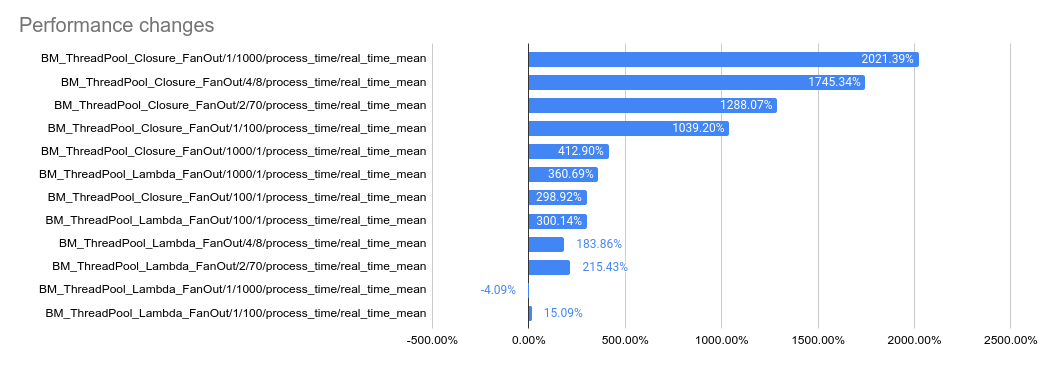

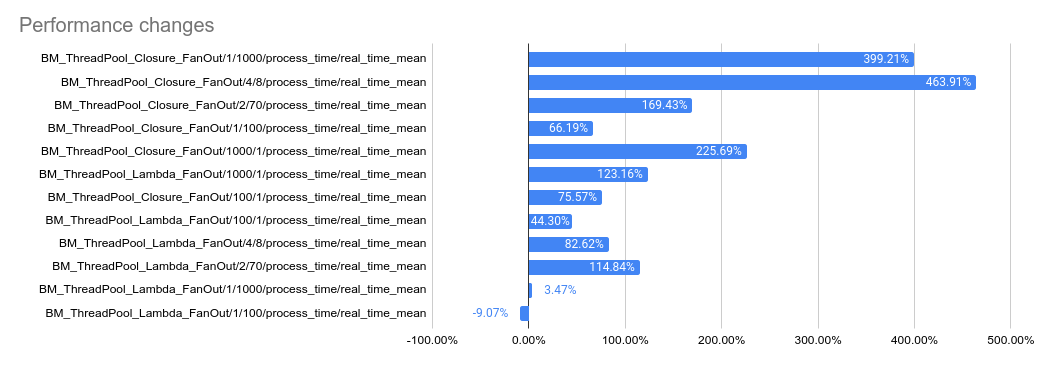

This PR implements a work-stealing thread pool for use inside

EventEngine implementations. Because of historical risks here, I've

guarded the new implementation behind an experiment flag:

`GRPC_EXPERIMENTS=work_stealing`. Current default behavior is the

original thread pool implementation.

Benchmarks look very promising:

```
bazel test \
  --test_timeout=300 \
  --config=opt -c opt \
  --test_output=streamed \
  --test_arg='--benchmark_format=csv' \
  --test_arg='--benchmark_min_time=0.15' \
  --test_arg='--benchmark_filter=_FanOut' \
  --test_arg='--benchmark_repetitions=15' \
  --test_arg='--benchmark_report_aggregates_only=true' \
  test/cpp/microbenchmarks:bm_thread_pool
```

2023-05-04: `bm_thread_pool` benchmark results on my local machine (64

core ThreadRipper PRO 3995WX, 256GB memory), comparing this PR to

master:

2023-05-04: `bm_thread_pool` benchmark results in the Linux RBE

environment (unsure of machine configuration, likely small), comparing

this PR to master.

---------

Co-authored-by: drfloob <drfloob@users.noreply.github.com>

---------

Co-authored-by: Sergii Tkachenko <hi@sergii.org>

Reverts grpc/grpc#32924. This breaks the build again, unfortunately.

From `test/core/event_engine/cf:cf_engine_test`:

```

error: module .../grpc/test/core/event_engine/cf:cf_engine_test does not depend on a module exporting 'grpc/support/port_platform.h'

```

@sampajano I recommend looking into CI tests to catch iOS problems

before merging. We can enable EventEngine experiments in the CI

generally once this PR lands, but this broken test is not one of those

experiments. A normal build should have caught this.

cc @HannahShiSFB

The job run time was creeping to the 2h timeout. Let's bump it to 3h.

Note that this is `master` branch, so it also includes the build time

every time we commit to grpc/grpc.

ref b/280784903

Makes some awkward fixes to the compression filter, call, and connected
channel to preserve the semantics our tests currently uphold.

Once the fixes described here

https://github.com/grpc/grpc/blob/master/src/core/lib/channel/connected_channel.cc#L636

are in, this gets a lot less ad-hoc, but that's likely going to be
after promises land on both the client & server side.

We specifically need special handling for server side cancellation in

response to reads wrt the inproc transport - which doesn't track

cancellation thoroughly enough itself.

---------

Co-authored-by: ctiller <ctiller@users.noreply.github.com>

1. `GrpcAuthorizationEngine` creates the logger from the given config in

its ctor.

2. `Evaluate()` invokes audit logging when needed.

---------

Co-authored-by: rockspore <rockspore@users.noreply.github.com>

- Add a new docker image "rbe_ubuntu2004" that is built in a way that's
  analogous to how our other testing docker images are built (this gives
  us control over what exactly is contained in the docker image and
  ability to fine-tune our RBE configuration)
- Switch RBE on linux to the new image (which gives us ubuntu20.04-based
  builds)

For some reason, RBE seems to have trouble pulling the docker image from
Google Artifact Registry (GAR), which is where our public testing images
normally live. As a workaround for now, I upload a copy of the
rbe_ubuntu2004 docker image to GCR as well, which makes RBE work
just fine (see comment in the `renerate_linux_rbe_configs.sh` script).

More followup items (config cleanup, getting local sanitizer builds

working etc.) are in go/grpc-rbe-tech-debt-2023

- Fix broken `bin/run_channelz.py` helper
- Create `bin/run_ping_pong.py` helper that runs the baseline (aka
  "ping_pong") test against preconfigured infra
- Setup automatic port forwarding when running `bin/run_channelz.py` and
  `bin/run_ping_pong.py`
- Create `bin/cleanup_cluster.sh` helper to wipe out xds resources based
  on namespaces present on the cluster

Note: this involves a small change to the non-helper code, but it's just
moving the part that creates the XdsTestServer/XdsTestClient instance
for a given pod.

Audit logging APIs for both built-in loggers and third-party logger

implementations.

C++ uses using decls referring to C-Core APIs.

---------

Co-authored-by: rockspore <rockspore@users.noreply.github.com>

Third-party loggers will be added in subsequent PRs once the logger

factory APIs are available to validate the configs here.

This registry is used in `xds_http_rbac_filter.cc` to generate service

config json.

Fix at-head tests (this is a missing piece of

https://github.com/grpc/grpc/pull/32905) with the following error:

```

/var/local/git/grpc/tools/run_tests/helper_scripts/build_python.sh: line 126: python3.8: command not found

```

While a proper fix is on the way, this mitigates the number of

duplicated container logs in the xds test server/client pod logs.

The issue is that we only wait between stream restarts when an exception

is caught, which isn't always the reason the stream gets broken. Another

reason is the main container being shut down by k8s. In this situation,

we essentially do

```py
while True:
    try:
        restart_stream()
        read_all_logs_from_pod_start()
    except Exception:
        logger.warning('error')
        # Only reached on an exception, so a clean stream end restarts immediately.
        wait_seconds(1)
```

This PR makes it

```py
while True:
    try:
        restart_stream()
        read_all_logs_from_pod_start()
    except Exception:
        logger.warning('error')
    finally:
        # Always wait before restarting, whether or not an exception occurred.
        wait_seconds(5)
```

Oops, I missed important changes from
https://github.com/grpc/grpc/pull/32712. It also turned out that there
are two problems that I couldn't fix at this point.

- Windows Bazel RBE linker error: this may be caused by how the new
  Bazel 6 invokes the build toolchain, but it's not clear. As a
  workaround, I switched to Bazel 5 by using
  `OVERRIDE_BAZEL_VERSION=5.4.1`.
- The `rules_pods` rule that fetches CronetFramework from CocoaPods is
  incompatible with what appears to be the built-in Apple toolchain
  (https://github.com/bazel-xcode/PodToBUILD/issues/232). I couldn't
  find a workaround, so I ended up disabling all tests depending on
  this target.

Fix `python_alpine` test failure with

```
fatal: detected dubious ownership in repository at '/var/local/jenkins/grpc'
To add an exception for this directory, call:

    git config --global --add safe.directory /var/local/jenkins/grpc
```

This paves the way for making pick_first the universal leaf policy (see

#32692), which will be needed for the dualstack design. That change will

require changing pick_first to see both the raw connectivity state and

the health-checking connectivity state of a subchannel, so that we can

enable health checking when pick_first is used underneath round_robin

without actually changing the pick_first connectivity logic (currently,

pick_first always disables health checking). To make it possible to do

that, this PR moves the health checking code out of the subchannel and

into a separate API using the same data-watcher mechanism that was added

for ORCA OOB calls.

`tearDownClass` is not executed when `setUpClass` fails. In the URL Map
test suite, this leads to a test client that failed to start not being
cleaned up.

This PR changes the URL Map test suite to register a custom
`addClassCleanup` callback instead of relying on `tearDownClass`.
Unlike `tearDownClass`, cleanup callbacks are executed when
`setUpClass` fails.

ref b/276761453

The PR also creates a separate BUILD target for:

- chttp2 context list

- iomgr buffer_list

- iomgr internal errqueue

This would allow the context list to be included as standalone

dependencies for EventEngine implementations.

As Protobuf is going to support Cord to reduce memory copy when

[de]serializing Cord fields, gRPC is going to leverage it. This

implementation is based on the internal one, but it's slightly modified
to use only the public APIs of Cord.

Followup for https://github.com/grpc/grpc/pull/31141.

IWYU and clang-tidy have been "moved" to a separate kokoro job, but as

it turns out the sanity job still runs all of `[sanity, clang-tidy,

iwyu]`, which makes the grpc_sanity jobs very slow.

The issue is that grpc_sanity selects tasks that have "sanity" label on

them and as of now, clang-tidy and iwyu still do.

It can be verified by:

```

tools/run_tests/run_tests_matrix.py -f sanity --dry_run

Will run these tests:

run_tests_sanity_linux_dbg_native: "python3 tools/run_tests/run_tests.py --use_docker -t -j 2 -x run_tests/sanity_linux_dbg_native/sponge_log.xml --report_suite_name sanity_linux_dbg_native -l sanity -c dbg --iomgr_platform native --report_multi_target"

run_tests_clang-tidy_linux_dbg_native: "python3 tools/run_tests/run_tests.py --use_docker -t -j 2 -x run_tests/clang-tidy_linux_dbg_native/sponge_log.xml --report_suite_name clang-tidy_linux_dbg_native -l clang-tidy -c dbg --iomgr_platform native --report_multi_target"

run_tests_iwyu_linux_dbg_native: "python3 tools/run_tests/run_tests.py --use_docker -t -j 2 -x run_tests/iwyu_linux_dbg_native/sponge_log.xml --report_suite_name iwyu_linux_dbg_native -l iwyu -c dbg --iomgr_platform native --report_multi_target"

```

This PR should fix this (by removing the umbrella "sanity" label from
clang-tidy and iwyu).

@sampajano

Previously, we didn't configure the failureThreshold, so it used its

default value. The final `startupProbe` looked like this:

```json
{
  "startupProbe": {
    "failureThreshold": 3,
    "periodSeconds": 3,
    "successThreshold": 1,
    "tcpSocket": {
      "port": 8081
    },
    "timeoutSeconds": 1
  }
}
```

Because of this, the total time before k8s killed the container was
3 failed probes (`failureThreshold`) * 3 seconds between probes
(`periodSeconds`) = 9 seconds total (±3 seconds waiting for the probe
response).
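
The same arithmetic as a tiny Python sketch. The function name is made up, and it deliberately ignores the probe response wait mentioned above:

```python
def seconds_until_kill(failure_threshold, period_seconds):
    """Approximate startup window: allowed failed probes times the probe interval."""
    return failure_threshold * period_seconds


# Values from the startupProbe shown above.
default_window = seconds_until_kill(failure_threshold=3, period_seconds=3)
```

A larger `failureThreshold` widens this window proportionally.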

This greatly affected the PSM Security test server, some implementations
of which waited for the ADS stream to be configured before starting to
listen on the maintenance port. This led to the server container
being killed ~7 times before a successful startup:

```

15:55:08.875586 "Killing container with a grace period"

15:53:38.875812 "Killing container with a grace period"

15:52:47.875752 "Killing container with a grace period"

15:52:38.874696 "Killing container with a grace period"

15:52:14.874491 "Killing container with a grace period"

15:52:05.875400 "Killing container with a grace period"

15:51:56.876138 "Killing container with a grace period"

```

These extra delays lead to PSM security tests timing out.

ref b/277336725